Modeling Imperfections Boosts Microscope Precision

Just when you thought optical microscopes couldn’t get any better, they just did. A research team has now shown that, by fitting microscope images to a mathematical model of the instrument, they can improve the precision of position measurements by a factor of 10 to 100. Applied to electron microscopy, the approach would offer a precision of about a thousandth of the length of a chemical bond.

Advances in optical microscopy have enabled “superresolution” images that show details down to the nanometer scale, better than the classical “diffraction limit,” which is about half of the imaging wavelength. But big improvements could be achieved even in conventional microscopy by using better methods of analyzing the images, say Brian Leahy and his colleagues from Cornell University in Ithaca, New York.

The precision in any imaging method is ultimately limited by statistical noise due to random fluctuations in the sample and apparatus. This limit corresponds to the so-called Cramér-Rao bound (CRB). “Any calculation you do based on the data will always have an uncertainty of at least the CRB,” says Leahy.
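For readers who want the bound in symbols, it can be written compactly. This is the standard textbook form rather than a formula quoted from the team's paper. The notation, and the simplifying assumption of independent Gaussian noise of standard deviation $\sigma$ on each pixel, are introduced here for illustration only.

$$
\operatorname{Cov}(\hat{\theta}) \succeq I(\theta)^{-1},
\qquad
I_{ij}(\theta) = \frac{1}{\sigma^{2}} \sum_{k} \frac{\partial m_k(\theta)}{\partial \theta_i}\, \frac{\partial m_k(\theta)}{\partial \theta_j}
$$

Here $\theta$ collects the quantities being measured (for example, a particle's position and radius), $\hat{\theta}$ is any unbiased estimate of them, $m_k(\theta)$ is the model's prediction for pixel $k$, and $I(\theta)$ is the Fisher information matrix. Every pixel whose predicted value changes with a parameter adds to the sum, so an analysis that ignores some of those pixels works with less information than the image contains.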

By studying the mathematical formulation of the CRB, Leahy and his colleagues realized that current methods of image analysis do much worse than the CRB because they don’t make use of all of the information in the image. For example, in fluorescence imaging using light-emitting dyes attached to a sample, the light from each dye molecule spreads out over many pixels, blurring the “ideal” image. This blurring reduces the precision of any measurement of an object’s boundaries and location.

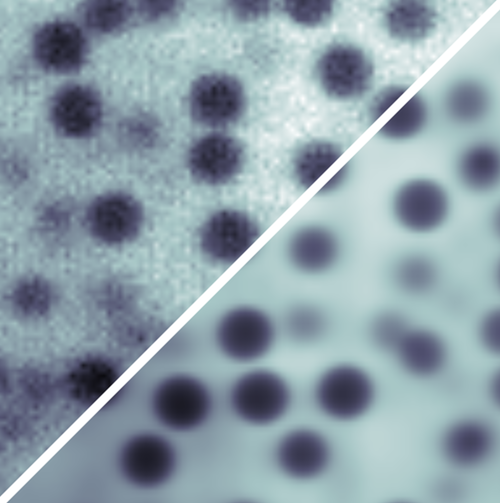

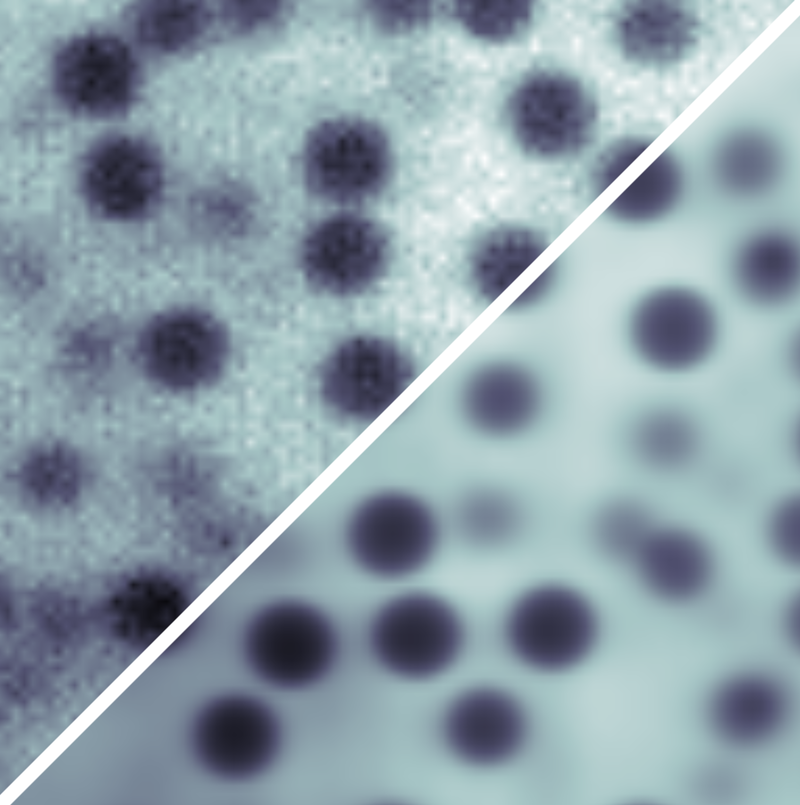

To overcome this imprecision, the researchers developed a model of the image-formation process in which light interacts with the sample and with the microscope optics. This model incorporates representations of, for example, the uneven distribution of fluorescent dyes and of the uneven laser illumination. Then by fitting the model parameters to the experimental data, each source of noise in the final image can be accounted for as accurately as possible, so that no useful information goes to waste. The researchers call their method parameter extraction from reconstructed images (PERI).
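The idea of fitting a forward model to pixel data can be illustrated with a deliberately tiny example. The sketch below is not PERI and does not reproduce the team's model: it assumes a one-dimensional image, a single Gaussian-shaped spot of known width, and Gaussian pixel noise, and it fits only the spot's center and brightness by Gauss-Newton least squares. The function names and all numerical values except the 125-nanometer pixel size are made up for illustration.

```python
import math
import random

def spot_profile(x0, width, n_pixels):
    """Unit-amplitude Gaussian spot sampled at integer pixel centers (toy model)."""
    return [math.exp(-(k - x0) ** 2 / (2 * width ** 2)) for k in range(n_pixels)]

def fit_spot(data, width, n_iter=20):
    """Gauss-Newton least-squares fit of the spot center and amplitude."""
    n = len(data)
    amp = max(data)                      # initial guess: brightest pixel value
    x0 = float(data.index(amp))          # initial guess: brightest pixel index
    for _ in range(n_iter):
        shape = spot_profile(x0, width, n)
        residual = [data[k] - amp * shape[k] for k in range(n)]
        # Derivatives of the model with respect to center and amplitude.
        d_x0 = [amp * shape[k] * (k - x0) / width ** 2 for k in range(n)]
        d_amp = shape
        # Solve the 2x2 normal equations (J^T J) step = J^T residual by hand.
        sxx = sum(v * v for v in d_x0)
        saa = sum(v * v for v in d_amp)
        sxa = sum(p * q for p, q in zip(d_x0, d_amp))
        gx = sum(p * q for p, q in zip(d_x0, residual))
        ga = sum(p * q for p, q in zip(d_amp, residual))
        det = sxx * saa - sxa * sxa
        x0 += (saa * gx - sxa * ga) / det
        amp += (sxx * ga - sxa * gx) / det
    return x0, amp

# Hypothetical test image: values below are illustrative, not from the study.
PIXEL_NM = 125.0                         # pixel size quoted in the article
TRUE_X0, TRUE_AMP, WIDTH, NOISE = 10.37, 100.0, 2.0, 1.0
rng = random.Random(0)                   # fixed seed so the run is repeatable
clean = [TRUE_AMP * s for s in spot_profile(TRUE_X0, WIDTH, 21)]
data = [v + rng.gauss(0.0, NOISE) for v in clean]

x_fit, amp_fit = fit_spot(data, WIDTH)
print(f"position error: {abs(x_fit - TRUE_X0) * PIXEL_NM:.2f} nm")
```

Because the fit uses every pixel the spot touches, the recovered center can land well inside a single pixel. A realistic model would add many more parameters, such as the illumination and dye distribution mentioned above, but the fitting principle is the same.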

Applying PERI to imaging of 1.3-micrometer-diameter spheres in a water-glycerol mixture, the Cornell team measured the radius and position of the particles to within 3 nanometers, even though individual pixels were 125 nanometers across. With this information for a collection of about 1200 particles, they reconstructed the distribution of interparticle separations and, in turn, mapped out the (repulsive) interparticle force with nanometer-scale precision.
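Once positions and radii are known, the separations follow from simple geometry. As a minimal sketch, assuming each particle is a sphere described by a center and a radius, the surface-to-surface gap between two particles is the center-to-center distance minus the two radii. The coordinates below are invented for illustration, the helper names are hypothetical, and the step from this distribution to a force is not shown.

```python
import math
from itertools import combinations

def surface_separations(positions, radii):
    """Surface-to-surface gap for every pair of spheres, in the input units."""
    gaps = []
    for i, j in combinations(range(len(positions)), 2):
        center_distance = math.dist(positions[i], positions[j])
        gaps.append(center_distance - radii[i] - radii[j])
    return gaps

def histogram(values, bin_width):
    """Count values per bin; keys are bin indices floor(value / bin_width)."""
    counts = {}
    for v in values:
        b = math.floor(v / bin_width)
        counts[b] = counts.get(b, 0) + 1
    return counts

# Made-up centers (nanometers) for three spheres of 1.3-micrometer diameter.
positions = [(0.0, 0.0, 0.0), (1400.0, 0.0, 0.0), (0.0, 1500.0, 0.0)]
radii = [650.0, 650.0, 650.0]

gaps = surface_separations(positions, radii)
counts = histogram(gaps, bin_width=100.0)
print(gaps)
print(counts)
```

With three particles the histogram is trivial, but the same two functions apply unchanged to a list of about 1200 fitted particles.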

What makes this technique possible now is computer power, not any great leap in understanding, says Leahy. Thanks to good computer hardware, “we’re breaking with the qualitative tradition in microscopy analysis so as to describe images with a complete, bottom-up approach.” He says that the technique will be useful for situations where the macroscopic properties depend sensitively on interparticle separations, such as in colloidal glasses or gels.

“This is really good stuff,” says condensed-matter physicist David Grier of New York University. “It solves a problem that other imaging techniques cannot solve and offers exquisite precision despite the samples’ extreme complexity. I don't believe that any other technique offers so much information for this type of system.”

However, Grier and soft matter physicist David Weitz of Harvard University think that PERI will be limited by the assumption that the sample is made exclusively of spherical objects—or at least that their shape needs to be known in advance. But Leahy doesn’t see any problem, in principle, with applying the approach to systems with more complex shapes or with other intricacies, such as dye concentrations that vary across the sample. “All of these can be modeled,” he says, although he admits that there may be limits to the complications that PERI can embrace. “Is it possible to model all the organelles and structure of a cell? I think we'll just have to wait and see.”

This research is published in Physical Review X.

–Philip Ball

Philip Ball is a freelance science writer in London. His latest book is How Life Works (Picador, 2024).