Meetings: APS April Meeting—Cosmologists Can’t Agree on the Hubble Constant

The Hubble constant H0 sets how fast galaxies recede from us as the Universe expands: a galaxy's recession speed is proportional to its distance, and H0 is the constant of proportionality. Over the past five years, cosmologists have recognized that there is a discrepancy between different measurements of this fundamental parameter. Three speakers in a session at the April Meeting of the American Physical Society in Columbus, Ohio, discussed the status of this “crisis in cosmology.” The field has now accepted that the problem is real, and some researchers are optimistic that it could lead to important discoveries.
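As a rough illustration of what H0 measures, Hubble's law for relatively nearby galaxies says that recession speed v = H0 × d. The minimal Python sketch below applies it to a hypothetical galaxy 100 Mpc away, using the Planck (2013) and SH0ES (2016) values discussed in this article. The function name and the 100 Mpc distance are illustrative assumptions, and the simple linear law is only a good approximation at modest distances.

```python
# Hubble's law for nearby galaxies: recession speed v = H0 * d.
# Hypothetical helper for illustration only.
def recession_velocity(h0_km_s_mpc: float, distance_mpc: float) -> float:
    """Return the recession speed in km/s for a galaxy at distance_mpc (Mpc)."""
    return h0_km_s_mpc * distance_mpc


# Illustrative galaxy at 100 Mpc, using the Planck 2013 and SH0ES 2016 values.
d = 100.0
v_planck = recession_velocity(67.3, d)
v_shoes = recession_velocity(73.2, d)
print(f"Planck: {v_planck:.0f} km/s, SH0ES: {v_shoes:.0f} km/s, "
      f"gap: {v_shoes - v_planck:.0f} km/s")
```

At this distance the two values of H0 imply recession speeds that differ by several hundred kilometers per second, which is the kind of gap the distance-ladder measurements have to resolve.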

The problem began in 2013, when the first results were reported from the Planck satellite, which had measured the cosmic microwave background (CMB). The Planck team’s value for H0 was 67.3±1.2 kilometers per second per megaparsec (km/s/Mpc), lower than previous measurements, which had been between 70 and 75 km/s/Mpc. Its error bars were also small enough that even this modest difference posed a potential problem. Planck’s 2015 result was not very different, though it came with even smaller error bars.

Prior to the Planck announcement, the Supernova H0 for the Equation of State (SH0ES) Collaboration, led by Adam Riess of Johns Hopkins University in Baltimore, had already set out to measure H0 in our cosmic neighborhood with higher precision than previous efforts. The researchers focused on recalibrating three standard distance-measuring techniques from scratch, said team member David Jones of the University of California at Santa Cruz: the apparent shift in position of nearby stars as Earth orbits the Sun (parallax), pulsating stars known as Cepheid variables, and Type Ia supernovae. Based on their improved distance measures, SH0ES reported an H0 value of 73.2 ± 1.7 km/s/Mpc in 2016. This result differed from Planck’s by more than 3 standard deviations, a statistically significant difference that could not easily be explained.
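To see why the error bars matter as much as the central values, here is a minimal Python sketch of the usual back-of-the-envelope tension estimate: the difference between two measurements divided by their uncertainties added in quadrature. It assumes independent, Gaussian errors, which is a simplification of the collaborations' actual statistical analyses, and the helper names are hypothetical. With the Planck 2013 and SH0ES 2016 numbers quoted above, it gives roughly 2.8 standard deviations; holding Planck's central value fixed, the gap passes 3 standard deviations only once Planck's uncertainty drops below about 1 km/s/Mpc, consistent with the smaller error bars of Planck's 2015 result.

```python
import math
from typing import Optional


def tension_sigma(a: float, sig_a: float, b: float, sig_b: float) -> float:
    """Gap between two measurements in units of their combined standard
    deviation, assuming independent Gaussian errors (added in quadrature)."""
    return abs(a - b) / math.hypot(sig_a, sig_b)


def max_sigma_for_tension(a: float, b: float, sig_b: float,
                          n_sigma: float) -> Optional[float]:
    """Largest uncertainty on `a` (central value held fixed) for which the gap
    to `b` still reaches n_sigma; None if sig_b alone already prevents it."""
    # Solve |a - b| / sqrt(s**2 + sig_b**2) = n_sigma for s.
    s_squared = (abs(a - b) / n_sigma) ** 2 - sig_b ** 2
    return math.sqrt(s_squared) if s_squared > 0 else None


planck_2013 = (67.3, 1.2)  # km/s/Mpc, central value and 1-sigma uncertainty
shoes_2016 = (73.2, 1.7)   # km/s/Mpc

t = tension_sigma(*planck_2013, *shoes_2016)
print(f"Planck 2013 vs SH0ES 2016: {t:.1f} sigma")

s_max = max_sigma_for_tension(planck_2013[0], shoes_2016[0], shoes_2016[1], 3.0)
print(f"Planck uncertainty needed to exceed 3 sigma: below {s_max:.2f} km/s/Mpc")
```

The quadrature sum is only a first look; a real comparison must account for correlated systematics and for the full, not necessarily Gaussian, probability distributions.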

Re-analyses of the SH0ES results confirmed the 2016 finding, as did additional measurements of H0 in the local Universe. But an independent H0 determination in 2016 based on so-called baryon acoustic oscillations—the sloshing of matter in the early Universe that produced the characteristic CMB patterns—lined up with the Planck result.

Stephen Feeney of the Flatiron Institute in New York said that despite considerable scrutiny, no one has found problems with the measurements large enough to close the gap. Cosmologists have also been discussing whether the standard cosmological model, known as ΛCDM, needs modification; the CMB-based determinations of H0 depend on this model. But every proposed adjustment to ΛCDM introduces at least some conflict with other types of data. Feeney estimates that the odds are 60:1 that all of the data could be explained by statistical flukes and ΛCDM alone.

Last year, the Planck team performed a more detailed analysis of their data and found that the CMB fluctuations on the smallest angular scales had the largest effect on lowering H0. Describing these results, Bradford Benson of Fermilab said that when the team used only their data from larger angular scales (above about 0.2°), they derived an H0 value consistent with the SH0ES result.

According to Benson, the smaller angular scales provide a more sensitive test of a particular parameter in ΛCDM than larger scales. The parameter is the density of neutrinos in the Universe, which should be proportional to the number of neutrino species (there are three in the standard model of particle physics). Increasing the number of neutrino types is one of the few ways to reasonably tweak ΛCDM and increase Planck’s H0 enough to close the gap with local measurements. However, this solution would also require more massive neutrinos to avoid disagreements with other cosmological data sets, said Benson. And of course, there isn’t much evidence for a fourth neutrino type.

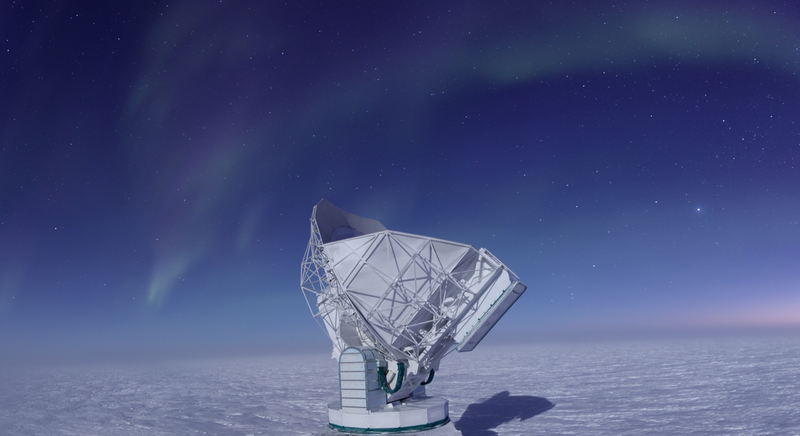

Jones, Feeney, and Benson agreed that the discrepancy isn’t going away and that more data are essential to explain it. The expected future trove of gravitational waves from binary neutron star mergers, for example, will provide independent estimates of H0. (Last year’s neutron star merger, GW170817, gave a value somewhere between those of Planck and SH0ES but with much larger error bars.) In addition, the South Pole Telescope and the Atacama Cosmology Telescope have upgraded equipment that will soon provide better CMB maps, and the Gaia satellite will provide parallax measurements of unprecedented precision.

Benson thinks there’s a good chance that a “benign” explanation will solve the problem. However, in a different session, Riess pointed out that problems with the value of H0 have led to great discoveries in the past, including the existence of dark energy.

–David Ehrenstein

David Ehrenstein is the Focus Editor for Physics.