Quantum Correlations Generate an Optical Lattice

Beams of light with phase-structured wave fronts provide a robust, high-dimensional medium for metrology and communication applications (see Synopsis: Twisting Light Beams on Demand). Optical lattices formed from single-photon versions of those structured beams have attracted attention as a tool for quantum-memory devices. However, such lattices have so far only been generated using classical light. Now, taking the phenomenon to the quantum regime, Andrew Cameron and his colleagues at the University of Waterloo, Canada, demonstrate a protocol for creating optical lattices from entangled photon pairs [1].

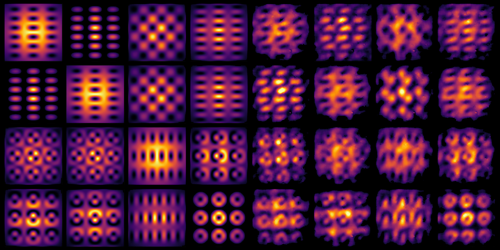

Using a laser-pumped nonlinear crystal, Cameron and his colleagues generated pairs of indistinguishable, polarization-entangled photons that propagated in different directions. One photon from each pair passed through a set of prisms that manipulated its orbital angular momentum, transforming the photon’s spatial profile in a way that depended on its polarization state. As a result, the polarization of the other, untransformed photon became entangled with the spatial profile of the transformed one. Both photons were detected by a photon-counting camera, which recorded each photon’s spatial profile as a 2D, periodically structured intensity pattern. Then, a novel pixel-by-pixel tomography process allowed the researchers to quantify the degree of correlation between the two photons’ spatial profiles. The experiments and corresponding theoretical calculations showed strong correlation between the photons in each pair, indicating entanglement.
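The logic of this step can be illustrated with a toy calculation. The sketch below is not the team's model: it assumes, purely for illustration, that the prisms map the two polarization states of the transformed photon onto two orthogonal spatial modes (given the hypothetical labels `s0` and `s1`), so that the pair ends up in the state (|H⟩|s0⟩ + |V⟩|s1⟩)/√2. Under that assumption, it computes how strongly the first photon's polarization is entangled with the second photon's spatial mode, and which spatial state a polarization measurement on the first photon would prepare remotely.

```python
import math

SQRT_HALF = 1 / math.sqrt(2)

# psi[a][b] is the amplitude for photon A having polarization a (0 = H, 1 = V)
# and photon B occupying spatial mode b (0 = s0, 1 = s1).
# The two-mode spatial basis is a simplifying assumption, not the real lattice.


def joint_state():
    """Toy state (|H>|s0> + |V>|s1>) / sqrt(2) after the polarization-dependent step."""
    return [[SQRT_HALF, 0.0], [0.0, SQRT_HALF]]


def reduced_density_matrix_a(psi):
    """Trace out photon B: rho[a][c] = sum_b psi[a][b] * conj(psi[c][b])."""
    return [
        [sum(psi[a][b] * complex(psi[c][b]).conjugate() for b in range(2))
         for c in range(2)]
        for a in range(2)
    ]


def entanglement_entropy_bits(psi):
    """Von Neumann entropy of photon A's reduced state (assumes psi is normalized)."""
    rho = reduced_density_matrix_a(psi)
    det = (rho[0][0] * rho[1][1] - rho[0][1] * rho[1][0]).real
    # For a 2x2 density matrix with unit trace, eigenvalues are (1 +/- disc) / 2.
    disc = math.sqrt(max(0.0, 1.0 - 4.0 * det))
    eigenvalues = [(1 + disc) / 2, (1 - disc) / 2]
    return -sum(p * math.log2(p) for p in eigenvalues if p > 1e-12)


def remotely_prepared_state(psi, pol_a):
    """Project photon A onto polarization pol_a; return B's normalized
    spatial state and the probability of that measurement outcome."""
    phi_b = [
        sum(complex(pol_a[a]).conjugate() * psi[a][b] for a in range(2))
        for b in range(2)
    ]
    prob = sum(abs(x) ** 2 for x in phi_b)
    norm = math.sqrt(prob)
    return [x / norm for x in phi_b], prob


if __name__ == "__main__":
    psi = joint_state()
    print("entanglement entropy (bits):", entanglement_entropy_bits(psi))
    diagonal = [SQRT_HALF, SQRT_HALF]  # (|H> + |V>) / sqrt(2)
    state_b, prob = remotely_prepared_state(psi, diagonal)
    print("photon B spatial state:", state_b, "with probability", prob)
```

In this toy picture, detecting the first photon with diagonal polarization leaves the second photon in an equal superposition of the two spatial modes, which is the sense in which a polarization measurement on one photon sets the spatial structure of the other. The real experiment involves continuous 2D intensity patterns rather than two discrete modes.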

Control over such entangled structured photons could provide a method for quantum sensing and manipulation of periodic structures. In future work, the team plans to generate optical lattices using more prisms, thereby expanding the number of obtainable orbital angular momentum modes and enlarging the “alphabet” available for quantum communication protocols.

–Rachel Berkowitz

Rachel Berkowitz is a Corresponding Editor for Physics Magazine based in Vancouver, Canada.

References

1. A. R. Cameron et al., “Remote state preparation of single-photon orbital-angular-momentum lattices,” Phys. Rev. A 104, L051701 (2021).