Snapshots from the April Meeting—Pinning Down the Universe’s Rate of Expansion, Particle Physics’ Gathering Storm, and More

Pinning Down the Universe’s Rate of Expansion

In 1998, observations of supernovae led to the remarkable conclusion that the Universe’s expansion is accelerating—a finding most often explained by a yet-to-be-deciphered form of dark energy. In a talk at a session on cosmology, Andreu Font-Ribera from Lawrence Berkeley National Lab reported on the most precise measurement of that expansion to date, carried out by the Baryon Oscillation Spectroscopic Survey (BOSS). And the new result pushes our knowledge further back in time: we now know that 10.8 billion years ago, the Universe was expanding by 1% every 44 million years.
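To relate that figure to the units cosmologists usually quote, the fractional rate can be converted into a Hubble parameter in km/s/Mpc. The short Python sketch below does the arithmetic. Its function names and unit constants are illustrative choices, not anything taken from the BOSS analysis, and it treats 1% growth over 44 million years as a constant linear rate, which is a small approximation.

```python
# Convert between "1% growth every N years" and a Hubble parameter in km/s/Mpc.
# Function names are hypothetical; the constants are standard unit conversions.
KM_PER_MPC = 3.0857e19        # kilometres in one megaparsec
SECONDS_PER_YEAR = 3.15576e7  # seconds in one Julian year


def hubble_from_fractional_rate(fraction, interval_years):
    """Expansion rate in km/s/Mpc for a given fractional growth per interval."""
    rate_per_second = fraction / (interval_years * SECONDS_PER_YEAR)
    return rate_per_second * KM_PER_MPC


def years_per_percent(hubble_km_s_mpc):
    """Years needed for 1% growth at a given expansion rate in km/s/Mpc."""
    rate_per_second = hubble_km_s_mpc / KM_PER_MPC
    return 0.01 / rate_per_second / SECONDS_PER_YEAR


# Figure quoted in the talk: 1% every 44 million years.
h_then = hubble_from_fractional_rate(0.01, 44e6)

# Assumed round number for today's expansion rate (not quoted in the talk).
years_now = years_per_percent(70.0)

print(f"Expansion rate 10.8 billion years ago: {h_then:.0f} km/s/Mpc")
print(f"Time for 1% growth today: {years_now / 1e6:.0f} million years")
```

Under these assumptions the quoted figure corresponds to roughly 220 km/s/Mpc. For comparison, a present-day rate of about 70 km/s/Mpc would mean 1% growth roughly every 140 million years.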

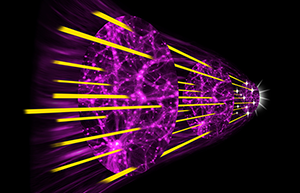

The BOSS analysis relies on data from 140,000 quasars collected by a 2.5-meter telescope at Apache Point, New Mexico. BOSS researchers have measured the expansion of the Universe by mapping the redshifts of light passing through intergalactic hydrogen clouds. These clouds have been imprinted with so-called baryon acoustic oscillations—sound waves created in the exploding plasma of the early Universe. Light from extremely distant but very bright quasars passes through the hydrogen clouds, which have high- and low-density regions caused by the baryon oscillations. The clouds are moving away from the quasars with the expansion of the Universe, so there are redshifts in the spectral absorption lines that yield information on the rate of expansion. And because the baryon oscillations are peaks and troughs of density, researchers can tie a given redshift to a particular position.

The team used two complementary techniques to get the uncertainty in the measured expansion rate down to 2%. One method, autocorrelation, compares the absorption in nearby quasar spectra. The other, cross-correlation, analyzes the amount of absorption as a function of separation from a quasar. As the analysis of the data set continues, researchers hope to better understand the nature of the dark energy that is causing the accelerating expansion.

Particle Physics’ Gathering Storm

The field of particle physics may be in serious trouble very soon, said Maria Spiropulu of Caltech and the CMS collaboration in her plenary session talk about the Higgs boson. As she explained later, an extension of the standard model of particle physics involving supersymmetry is widely believed to be the solution to several important problems, such as the instability of the Higgs boson and the arbitrariness of the masses of fundamental particles. But the most popular supersymmetric theories predict new particles not much heavier than the Higgs, and they ought to have been seen by now at the Large Hadron Collider (LHC). LHC physicists have been hunting for such particles for years and have ruled out almost all of the predicted mass range for such theories.

At the same time, there is a broad consensus that dark matter is likely to be made of supersymmetric particles that should be seen soon in so-called direct detection experiments, which have been running for several years. Supersymmetry and dark matter have become so important to particle physicists that “we have cornered ourselves experimentally,” said Spiropulu. If neither is detected in the next few years, radical new ideas will be required. Spiropulu compared the situation to the era before 1905, when the concept of ether as the medium for all electromagnetic waves could not be verified. The field was in a state of confusion until Einstein’s radical theory overturned several formerly sacred principles. “I think a [similar] revolution is coming,” she said. “It’s the best of times for solving big puzzles.”

Moore’s Law and Clean Energy Technologies

Industries producing energy-efficient devices can learn some lessons from the computer chip industry, according to Robert Van Buskirk of Lawrence Berkeley National Lab, who spoke at a session on energy efficiency. The number of transistors on an integrated circuit has doubled roughly every two years for decades, a rule known as Moore’s Law. But energy-efficient devices have a much slower improvement rate: refrigerators and lighting, for example, have halved their energy consumption only every 20 to 25 years.
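The gap between those two rates is easier to see on a per-year basis. As a rough sketch, the Python snippet below converts a doubling or halving time into an equivalent compound annual change. The function name is hypothetical, and the calculation assumes smooth exponential change, which real products only approximate.

```python
def annual_change(factor, years):
    """Compound annual fractional change that yields `factor` after `years`."""
    return factor ** (1.0 / years) - 1.0


# Transistor count doubles roughly every two years.
transistor_growth = annual_change(2.0, 2.0)

# Energy consumption halves every 20 to 25 years.
energy_change_fast = annual_change(0.5, 20.0)
energy_change_slow = annual_change(0.5, 25.0)

print(f"Transistors per chip: {transistor_growth:+.1%} per year")
print(f"Energy use, 20-year halving: {energy_change_fast:+.1%} per year")
print(f"Energy use, 25-year halving: {energy_change_slow:+.1%} per year")
```

On these assumptions, transistor counts grow by about 41% per year, while energy consumption for refrigerators and lighting falls by only about 3% per year.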

Van Buskirk showed results from a simulation of the process of improving the energy efficiency of a device. He made some basic assumptions about the random set of all possible changes to a device, each of which can help or hurt its energy efficiency. He found that the pace of improvements depends most sensitively on the degree of creativity of the engineers—represented in his model by the width of a Gaussian distribution of all possible changes one could make to the device. This result suggests that companies and policy makers should encourage as wide a range of approaches as possible to the challenges of increasing energy efficiency, Van Buskirk said.
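The link between the width of the distribution and the pace of improvement can be illustrated with a toy model. The Python sketch below is not Van Buskirk's simulation, whose details were not given here. It simply assumes that each candidate change shifts efficiency by an amount drawn from a Gaussian, that the average change is slightly harmful, and that engineers adopt only the changes that help. The function name, parameter values, and adoption rule are all assumptions made for illustration.

```python
import random


def simulate_improvement(width, steps=1000, mean=-0.01, seed=0):
    """Toy model: cumulative efficiency gain after `steps` candidate changes.

    `width` is the standard deviation of the Gaussian of possible changes,
    standing in for the range of ideas the engineers try (an assumption).
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    gain = 0.0
    for _ in range(steps):
        change = rng.gauss(mean, width)  # a change can help (>0) or hurt (<0)
        if change > 0:                   # adopt only the changes that help
            gain += change
    return gain


narrow = simulate_improvement(width=0.01)
wide = simulate_improvement(width=0.02)

print(f"Cumulative gain, narrow distribution: {narrow:.2f}")
print(f"Cumulative gain, wide distribution:   {wide:.2f}")
```

In this toy setting, widening the distribution raises the cumulative gain because large beneficial changes become more common while harmful ones are simply discarded.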

In a separate analysis, Van Buskirk looked at decades’ worth of sales and efficiency data for a range of products including washing machines, batteries, and air conditioning systems. He developed an equation that predicts a Moore’s Law for energy efficiency and also suggests where future investments are likely to be most effective at increasing efficiency. His analysis recommends investing in energy savings over the long term (rather than in cheaper products for shorter-term gain) and choosing markets where the price of energy is high. One example is long-lived, off-grid solar electricity technology, which would help poor countries that lack electric power infrastructure.

– David Ehrenstein and David Voss