Robust Networks

As man-made networks, from Facebook to the power grid, grow in importance, it is crucial that we build them to be as reliable as possible. In a paper in Physical Review Letters, Tiago Peixoto and Stefan Bornholdt at the University of Bremen, Germany, show how to build a large-scale network that best withstands random failures or intentional attacks.

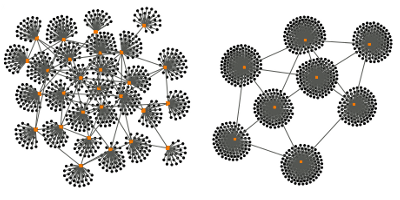

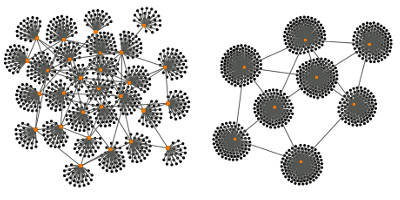

The authors analyze the conditions under which, in a highly interdependent network, a problem in a small section can spread to the entire network and cause widespread failure. To do this, they borrow the tools of percolation theory (which describes the movement of liquids through porous media) and use them to develop a model that describes networks as ensembles of discrete “blocks” of connected and interdependent nodes. With this model, they determine which network topologies are most robust against random failures (which can strike any node) and which are most robust against targeted attacks (which are directed at the most highly connected nodes).
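The distinction between random failures and targeted attacks can be made concrete with a small simulation. The sketch below is not the authors' block-ensemble percolation model. It swaps in a plain Monte Carlo experiment on an Erdős–Rényi random graph: remove a fraction of nodes, either uniformly at random or highest-degree first, and measure how much of the network remains in its largest connected cluster. The function names and parameters (`random_graph`, `surviving_fraction`, `n`, `p`, `fraction`) are hypothetical, chosen only for illustration.

```python
import random
from collections import deque


def random_graph(n, p, seed=0):
    """Hypothetical helper: Erdős–Rényi G(n, p) graph as adjacency sets."""
    rng = random.Random(seed)
    adj = {v: set() for v in range(n)}
    for u in range(n):
        for v in range(u + 1, n):
            if rng.random() < p:
                adj[u].add(v)
                adj[v].add(u)
    return adj


def largest_component(adj, removed):
    """Size of the largest connected cluster among nodes not in `removed` (BFS)."""
    seen = set(removed)  # removed nodes are treated as already visited
    best = 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue = deque([start])
        size = 0
        while queue:
            u = queue.popleft()
            size += 1
            for w in adj[u]:
                if w not in seen:
                    seen.add(w)
                    queue.append(w)
        best = max(best, size)
    return best


def surviving_fraction(adj, fraction, mode, seed=0):
    """Remove a fraction of nodes; return giant-cluster size divided by n.

    mode="random":   failures hit any node with equal probability.
    mode="targeted": attacks remove the nodes with the highest initial degree
                     (degrees are not recomputed after each removal).
    This is an illustrative assumption, not the paper's method.
    """
    n = len(adj)
    k = int(fraction * n)
    if mode == "random":
        removed = set(random.Random(seed).sample(list(adj), k))
    elif mode == "targeted":
        by_degree = sorted(adj, key=lambda v: len(adj[v]), reverse=True)
        removed = set(by_degree[:k])
    else:
        raise ValueError("mode must be 'random' or 'targeted'")
    return largest_component(adj, removed) / n


if __name__ == "__main__":
    g = random_graph(500, 0.01, seed=1)  # mean degree of about 5
    for f in (0.0, 0.2, 0.4):
        print(f, surviving_fraction(g, f, "random"), surviving_fraction(g, f, "targeted"))
```

Removing high-degree nodes first cuts many more links per removed node than removing nodes at random. That is why attacks aimed at hubs tend to break up a network faster than random failures. A study of real topologies would repeat the experiment over many random seeds and compare different graph structures.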

The research shows that networks with a highly linked core connected to a periphery are the most robust to random failures. This may explain why similar core-periphery topologies have emerged in many real systems, from the internet to gene-regulation networks. By contrast, randomly connected, decentralized topologies offer the best protection against targeted attacks. – Sami Mitra