Longer Movies at Four Trillion Frames per Second

Generating images at a rate of more than a trillion per second, today's fastest cameras can catch molecules as they react with one another. But despite this high rate, when observing nonluminous objects, they can only produce a handful of images in a single sequence. Engineers have now demonstrated a rate of nearly four trillion frames per second, capturing as many as 60 consecutive images. The technique should allow video analysis of ultrafast processes such as the interaction of light with eye tissue in laser surgery.

High-quality, fast cameras use semiconductor structures called CCD arrays to rapidly store image data before moving them off to longer-term storage. At the highest speeds, these cameras can only produce a handful of consecutive frames, mainly because of the limited CCD space. The images must be stored in nonoverlapping subregions of the CCD, so increasing the number of images leads to a reduction in image resolution. To overcome this limitation, researchers led by photonics specialist Feng Chen of the Xi’an Jiaotong University in China have now exploited a technique called compressive sampling, which allows the storage of images in overlapping CCD regions.

Their setup first sends a laser pulse containing a narrow range of frequencies through a system of lenses and a diffraction grating, which together stretch the pulse out into a “chirped pulse” of longer duration. This pulse has higher frequencies of light at its leading edge and lower frequencies trailing behind. In addition, in order to eventually form a 2D image, the pulse is widened in the directions perpendicular to its propagation.

Following standard techniques, a pulse with this frequency structure can be used to produce multiple frames in a video because there is a precise correspondence between the frequency of light and its position within the pulse, says Chen. If the pulse passes through some object, the scattered light can be assembled into a time-ordered video by using frequency to identify the image associated with each moment in time.
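The frequency-to-time correspondence can be illustrated with a short sketch. It assumes a perfectly linear chirp, and the function name `frame_index` and the band edges (380 THz leading, 370 THz trailing) are made-up values for illustration, not figures from the experiment.

```python
def frame_index(freq_thz, f_lead_thz, f_trail_thz, n_frames):
    """Map an optical frequency to a frame number, assuming a linear chirp.

    Hypothetical helper: the leading edge of the pulse carries f_lead_thz
    and the trailing edge carries f_trail_thz.
    """
    # Fraction of the way from the leading edge (0.0) to the trailing edge (1.0).
    fraction = (f_lead_thz - freq_thz) / (f_lead_thz - f_trail_thz)
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("frequency lies outside the pulse bandwidth")
    # Split the pulse into n_frames equal time slices; clamp the trailing edge.
    return min(int(fraction * n_frames), n_frames - 1)


# Illustrative band edges only (assumed, not from the article).
first = frame_index(380.0, 380.0, 370.0, 60)   # leading-edge light
middle = frame_index(375.0, 380.0, 370.0, 60)  # mid-band light
last = frame_index(370.0, 380.0, 370.0, 60)    # trailing-edge light
```

Under these assumptions, light at the leading-edge frequency lands in the first frame and light at the trailing-edge frequency lands in the last, so sorting the detected light by frequency also sorts it by time.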

In the new imaging process, the chirped pulse interacts with an object of interest, and a random two-dimensional pattern is then imprinted on the scattered light before it is focused onto a CCD camera. The still frames from this pulse are written into overlapping regions of the CCD array, but the two-dimensional pattern imprinted on each image makes it possible to recover the frames with appropriate image processing.

“The decoding scheme essentially un-mixes the overlapped images,” says team member Terence Wong, at the Hong Kong University of Science and Technology. “This way we can pack more images onto the same sensor.”
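A toy version of the encoding step gives a feel for how frames end up sharing pixels. This is only a sketch under stated assumptions: the function name `encode`, the one-column shift per frame, and the tiny 4 × 6 frames are all hypothetical, and the recovery step, which in compressive sampling typically relies on a sparsity-based solver, is not shown.

```python
import random


def encode(frames, mask):
    """Sum masked frames onto one sensor, shifting frame k by k columns.

    Assumption: each successive frame lands one pixel column further
    along the sensor, so neighboring frames overlap almost entirely.
    """
    rows, cols = len(mask), len(mask[0])
    sensor = [[0.0] * (cols + len(frames) - 1) for _ in range(rows)]
    for k, frame in enumerate(frames):
        for r in range(rows):
            for c in range(cols):
                # The known random pattern tags every frame before the sum.
                sensor[r][c + k] += mask[r][c] * frame[r][c]
    return sensor


random.seed(0)
rows, cols, n_frames = 4, 6, 3
# Random binary pattern, known to whoever later decodes the image.
mask = [[random.randint(0, 1) for _ in range(cols)] for _ in range(rows)]
# Three flat test frames with brightness 1, 2 and 3.
frames = [[[float(k + 1)] * cols for _ in range(rows)] for k in range(n_frames)]
sensor = encode(frames, mask)
```

In this toy case the three frames occupy a 4 × 8 patch of the sensor, whereas storing them side by side without overlap would need 4 × 18 pixels. The price is that the frames are mixed together, which is why the known pattern is needed to separate them afterward.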

The team demonstrated the capabilities of their method by taking pictures of a short, intense pulse of light traveling within a transparent solid. Intense light alters the refractive index of this solid material, so as the imaging laser pulse moved through, it became distorted in a way that revealed the locations of the light pulse being imaged.

Using a single chirped laser pulse, the system could produce an image every 260 femtoseconds and could generate a 60-frame video, although only 40 frames were needed to capture the moving light pulse. In a separate experiment, the team made a 60-frame video showing a light pulse leaving the material and then being reflected back in (see video above); this video required 414 femtoseconds between frames in order to observe all of the action.
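The frame rates implied by these intervals follow from simple arithmetic, sketched below. The helper names are illustrative, and the recording window is approximated as one interval per frame.

```python
FEMTOSECOND = 1e-15  # seconds


def frames_per_second(interval_fs):
    """Frame rate corresponding to a given time between frames."""
    return 1.0 / (interval_fs * FEMTOSECOND)


def recording_window_ps(interval_fs, n_frames):
    """Approximate duration covered by the video, in picoseconds."""
    return interval_fs * n_frames / 1000.0


fast_rate = frames_per_second(260)          # roughly 3.85 trillion frames per second
slow_rate = frames_per_second(414)          # roughly 2.4 trillion frames per second
fast_window = recording_window_ps(260, 60)  # about 15.6 picoseconds
slow_window = recording_window_ps(414, 60)  # about 24.8 picoseconds
```

The 260-femtosecond interval is what gives the "nearly four trillion frames per second" figure, while the longer 414-femtosecond interval trades speed for a recording window of about 25 picoseconds.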

“The experiments demonstrate a remarkable imaging speed,” says computational imaging expert Jinyang Liang of the University of Quebec in Canada, who suggests that the technique will find immediate uses in optics and laser physics. “With further development, it might also be used as an advanced imaging tool to inspect biological samples in laser surgeries and imaging-based disease diagnosis.”

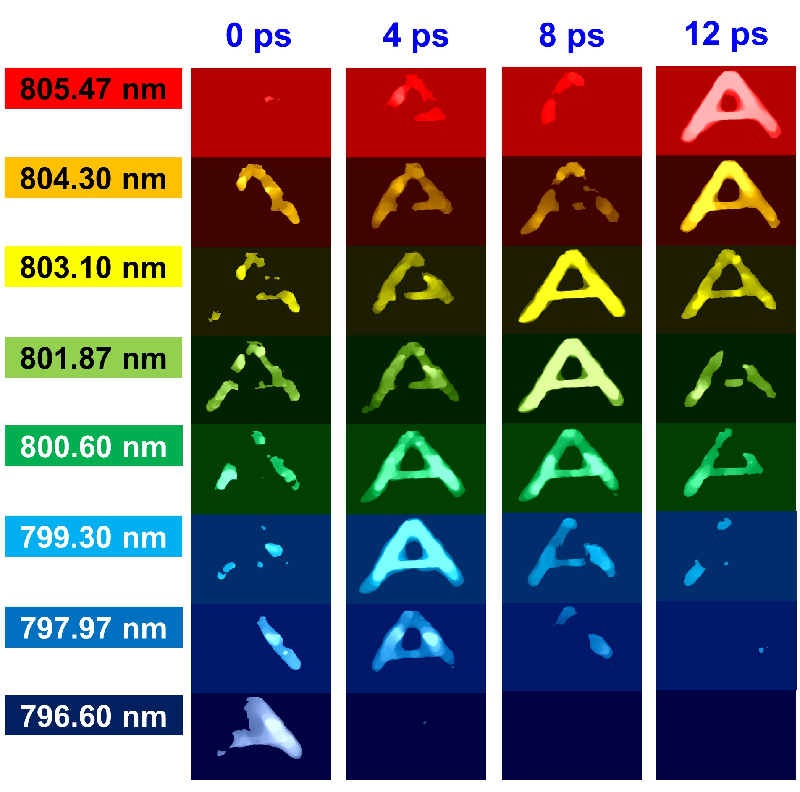

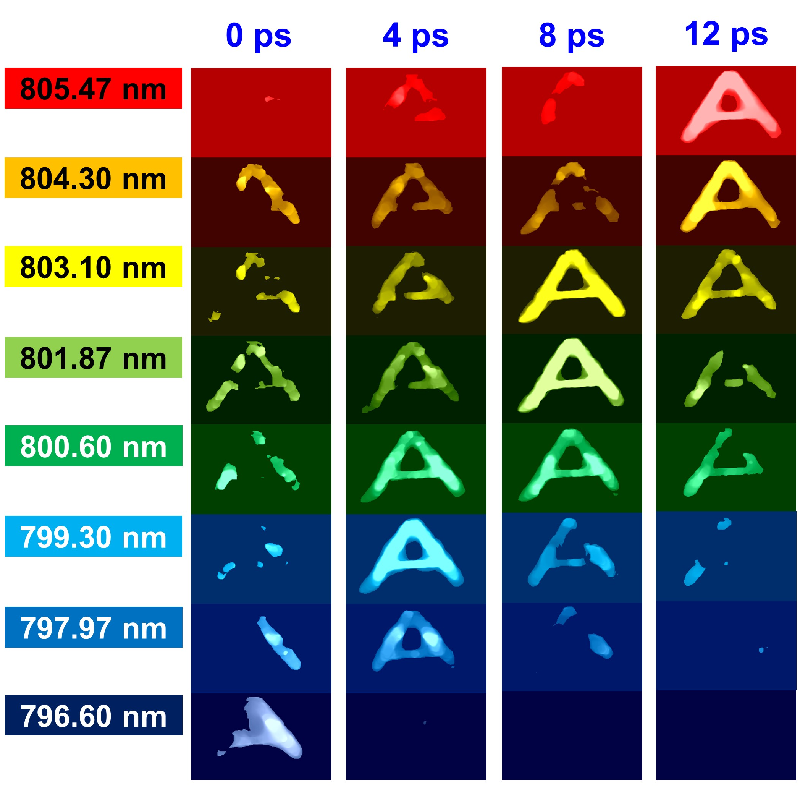

The team also produced a rapid series of images of the letter “A” filled with a dye. Each image covered a narrow range of wavelengths but shifted a bit with respect to the one before, resulting in an image and a spectrum of the object within 12 picoseconds.

Chen and colleagues suggest that the ability to take such quick spectral snapshots of objects will be particularly useful in studies of phenomena such as lattice vibrations in solids or interactions of intense laser pulses with plasma. Moreover, movies with many more than 60 frames are possible with further development, Chen says. “By using a broader spectrum light source, we can achieve a larger frame number without compromising the imaging speed.”

This research is published in Physical Review Letters.

–Mark Buchanan

Mark Buchanan is a freelance science writer who splits his time between Abergavenny, UK, and Notre Dame de Courson, France.