Novel Coronavirus Prompts Computer Sharing

One resource that’s not in short supply during the pandemic is computing power. For researchers working to combat COVID-19, access to these resources has recently gotten easier, thanks to a collective effort that unites the computing capabilities of 33 government labs, universities, and private companies in the US. On March 24th, the White House Office of Science and Technology Policy announced the COVID-19 High Performance Computing (HPC) Consortium. The program, which hopes to spur epidemiological modeling and the development of treatments and a vaccine, gives selected researchers free computing time on machines ranging from small clusters to some of the world’s largest supercomputers. “It’s sort of like a super user facility,” says Katherine Riley, Director of Science for the Argonne Leadership Computing Facility, Illinois, one of the HPC members.

Computational work will be instrumental in the global fight against COVID-19. Announcing the new consortium at a press conference, Paul Dabbar, Undersecretary for Science at the US Department of Energy (DOE), pointed to a recent study by a team of structural biologists at Oak Ridge National Laboratory (ORNL), Tennessee. The researchers had used molecular dynamics simulations to analyze 8000 small-molecule drug compounds from a chemical database, identifying 77 that warranted further investigation as potential drugs for attacking the novel coronavirus.

Researchers anywhere in the world can submit a proposal to use the HPC machines. A panel of scientists and computer specialists is reviewing the proposals and allocating computing time on a rolling basis. (As of today, 27 HPC projects are underway and more have been approved.) Although some of the member computing facilities have always been available to outside researchers, the new initiative provides access to public and private resources through one streamlined process.

Many of the projects will model the novel coronavirus, known as SARS-CoV-2, with molecular dynamics simulations. Scientists have previously used this popular computational tool to develop new treatments for HIV and cancer through established codes. They are now hoping to have similar success using the codes to determine the structure of the virus’ RNA and its surrounding protein shell, which will help modelers design a vaccine.

But simulating a complex molecule with molecular dynamics can take “vast quantities of time,” explains Irene Qualters, Associate Laboratory Director for Simulation and Computing at Los Alamos National Laboratory, New Mexico. That’s why many of the projects are incorporating artificial intelligence (AI) to guide the calculations. “AI is being used to target a simulation for a particular aspect of the virus.”

One such project is led by Arvind Ramanathan, a computational biologist at Argonne National Laboratory, Illinois. Combining molecular dynamics with AI tools, his team wants to identify small molecules that target the proteins that make up SARS-CoV-2 and to predict how these proteins rearrange in response to these molecules. This information will tell researchers which proteins are vulnerable to, and possibly inhibited by, certain drugs. “You’re throwing proteins and drugs at the virus to see how it responds,” Riley says.

Instead of sifting through known molecules, researchers on another project hope to land on the right drug by designing molecules from scratch. Stratos Davlos, the chief technology officer of the AI-focused company Innoplexus, says his group is using neural networks to design molecules that bind to and inhibit the relevant proteins on the virus. These molecules are expected to have higher potency and better drug-likeness characteristics than the known ones. In a separate project, Ahmet Yildiz of the University of California, Berkeley, and Mert Gur of Istanbul Technical University in Turkey are performing molecular dynamics calculations on two supercomputers to explore how the infamous “spike” proteins on the surface of SARS-CoV-2 bind to host cells. Figuring out how to block the sites where those spikes attach could help researchers develop much-needed therapies.

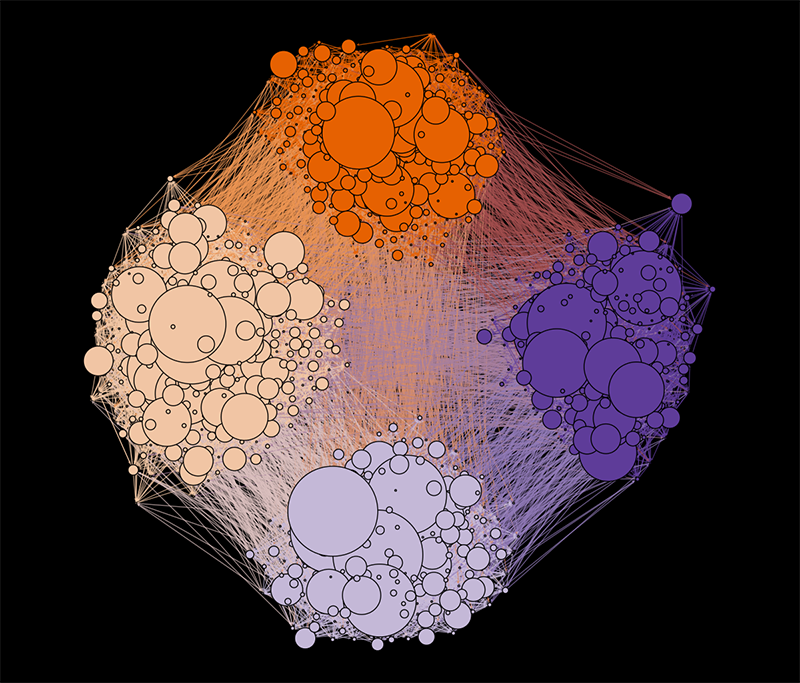

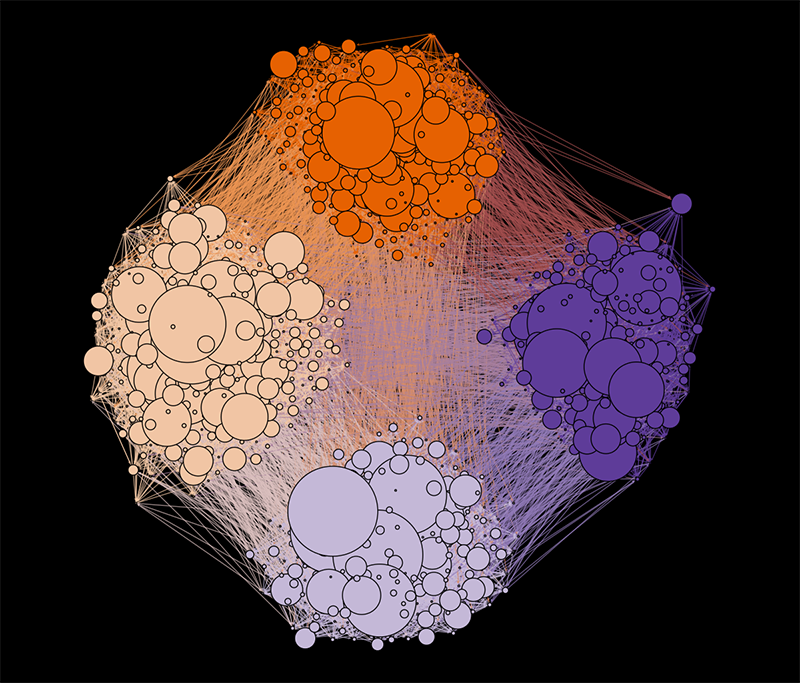

When a vaccine eventually exists, it will be in limited supply. Public health officials will therefore need to determine which individuals to vaccinate at a given location in order to stop the disease’s spread. For computer scientist Mahantesh Halappanavar and his colleagues at Pacific Northwest National Laboratory, Washington, as well as their collaborators at Washington State University, the new consortium presented an opportunity to use their expertise to help solve this highly complex optimization problem. Prior to the outbreak, Halappanavar and his group had been working on one of the planet’s most powerful supercomputers, Summit at ORNL, to develop an algorithm that would solve a related conundrum, known as the influence maximization problem. “Let’s say you’re an advertising company with 100 samples of a product,” Halappanavar says. “You want to distribute your 100 samples so that your whole network of 100,000 people learns about your product as quickly as possible.” Public officials are faced with a similar problem when they need to decide which at-risk individuals to isolate or how best to distribute a limited supply of vaccine, protective masks, or testing kits.
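To make the influence maximization idea concrete, here is a minimal sketch in Python. It is not the team's code. It assumes a textbook setup: a simple "independent cascade" spreading model, in which each newly reached person passes the message to each neighbor with a fixed probability, and a greedy rule that repeatedly adds whichever person most increases the estimated reach. The function names, the toy network, and all parameter values are hypothetical and chosen only for illustration.

```python
import random


def simulate_cascade(graph, seeds, prob, rng):
    """Run one independent-cascade simulation; return how many nodes are reached."""
    active = set(seeds)
    frontier = list(seeds)
    while frontier:
        next_frontier = []
        for node in frontier:
            for neighbor in graph.get(node, ()):
                # Each newly reached node gets one chance to reach each neighbor.
                if neighbor not in active and rng.random() < prob:
                    active.add(neighbor)
                    next_frontier.append(neighbor)
        frontier = next_frontier
    return len(active)


def expected_spread(graph, seeds, prob, runs, rng):
    """Estimate the average reach of a seed set by Monte Carlo sampling."""
    total = sum(simulate_cascade(graph, seeds, prob, rng) for _ in range(runs))
    return total / runs


def greedy_seeds(graph, budget, prob=0.1, runs=200, seed=0):
    """Greedily pick `budget` seed nodes, each maximizing the estimated spread."""
    rng = random.Random(seed)  # fixed seed so repeated calls give the same answer
    chosen = []
    for _ in range(budget):
        candidates = sorted(set(graph) - set(chosen))
        best = max(
            candidates,
            key=lambda n: expected_spread(graph, chosen + [n], prob, runs, rng),
        )
        chosen.append(best)
    return chosen


# Hypothetical toy network (undirected, stored as adjacency lists):
# a hub (node 0) with five contacts, plus a separate pair (nodes 6 and 7).
toy_graph = {
    0: [1, 2, 3, 4, 5],
    1: [0], 2: [0], 3: [0], 4: [0], 5: [0],
    6: [7], 7: [6],
}

print(greedy_seeds(toy_graph, budget=2, prob=0.5))
```

In this sketch, the "samples" in Halappanavar's analogy correspond to `budget`, and the people who receive them are the returned seed nodes. Every greedy step reruns many simulations for every candidate, so the cost grows quickly with network size, which gives a sense of why problems of this kind are run on machines like Summit.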

Through the consortium, Halappanavar and his team, in collaboration with computational epidemiologists at the University of Virginia, Charlottesville, have now received additional time on Summit to explore these problems. Running their state-of-the-art code, they can simulate the outcome of different distribution scenarios within seconds. Although they are currently working with artificial data, their goal is to make the code available for public health and disease control organizations to run with actual data.

Halappanavar says that the consortium motivated him to fast-track his project and to collaborate with people who would be interested in his solutions. Encouraging this kind of interdisciplinary collaboration is a valuable effect of the consortium, Qualters explains. “The scientific community is responding in exactly the way that you might hope.”

–Rachel Berkowitz

Rachel Berkowitz is a Corresponding Editor for Physics based in Seattle, Washington, and Vancouver, Canada.

Correction 25 April, 2020: In an earlier version of the article, Mert Gur's name was spelled incorrectly.