Multi-Echo Imaging

A bat can reconstruct its surroundings by emitting a chirp and listening for the waves that bounce off nearby objects. A new system takes this echolocation to the next level by recording sound or light waves that bounce multiple times off walls and other objects within a room [1]. The technique uses a bare-bones setup with a fixed emitting device and a single-pixel detector. To extract a 3D image from the signal, the system relies on a machine-learning algorithm that is initially trained with images of the room taken with a normal camera. The method could lead to low-profile monitoring systems for home or hospital use.

The concept of “taking a picture” has expanded in the last few years, as researchers have shown that they can unscramble seemingly random wave data to construct an image. Examples include bouncing light rays around an obstacle (see Synopsis: Seeing Around Corners in Real Time) or collecting scattered light from an opaque material (Focus: Reversing Light Scattering with a Handful of Photons). These methods typically use a multi-pixel camera or a light source that moves in some way to create a scan. Now Alex Turpin and his colleagues from the University of Glasgow, UK, have shown that simpler equipment—designed to record multiply bouncing echoes—can produce 3D images of a room.

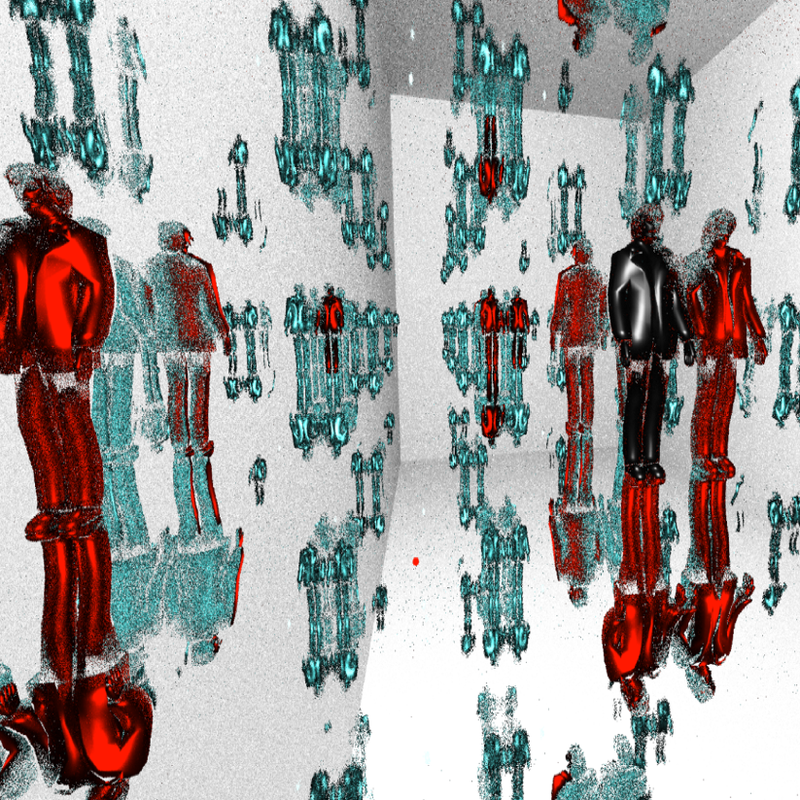

The method involves a single-pixel detector and a fixed source that emits short pulses in all directions. The team tested two separate setups: one using GHz-frequency radio waves and the other using kHz-frequency sound waves. At first glance, it seems impossible to recover a 3D image from the detector, whose output signal indicates just the amount of wave energy striking the sensor at a sequence of times following a pulse. Many different room arrangements can produce the same series of echoes at the detector. To break this so-called degeneracy, Turpin and his colleagues took a different approach: Rather than use physics principles to determine the 3D structures that would produce the echoes, they employed a neural network, a type of machine-learning system.
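The degeneracy is easy to see in a toy model. The Python sketch below is an illustration only, not the team's code: it assumes a source and detector sitting together at the origin, counts only direct (single-bounce) echoes, takes the speed of sound as roughly 343 m/s, and uses hypothetical names such as `echo_histogram`. Two reflectors at the same distance but in different directions give the detector exactly the same signal.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s, approximate value for air (assumption)

def echo_histogram(reflectors, n_bins=64, bin_width=1e-3, speed=SPEED_OF_SOUND):
    """Toy single-pixel signal: counts of direct echoes per time bin.

    Assumes the source and detector are both at the origin, so each
    reflector contributes one echo at the round-trip time 2 * distance / speed.
    """
    hist = np.zeros(n_bins)
    for position in reflectors:
        distance = np.linalg.norm(position)
        round_trip_time = 2.0 * distance / speed
        bin_index = int(round_trip_time / bin_width)
        if bin_index < n_bins:
            hist[bin_index] += 1.0
    return hist

# Two different scenes: one reflector 2 m away along x, or 2 m away along y.
scene_a = [np.array([2.0, 0.0, 0.0])]
scene_b = [np.array([0.0, 2.0, 0.0])]

hist_a = echo_histogram(scene_a)
hist_b = echo_histogram(scene_b)

# The detector cannot tell the two scenes apart from direct echoes alone.
print(np.array_equal(hist_a, hist_b))
```

In this simplified picture the time trace encodes only distance, which is why a single trace alone cannot fix where an object sits.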

The training began with the installation of a 3D (time-of-flight) camera next to the single-pixel detector. Each “true” image recorded by the camera was associated with an echo signal from the detector. The team repeated this process of associating images with echo signals thousands of times as persons and objects moved throughout the room. After this training—which typically took ten minutes to complete—the neural network had a “volumetric fingerprint” of the room, allowing it to instantaneously produce images from echoes bouncing off arbitrary persons or objects in the room, Turpin says.
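The training loop described above is ordinary supervised learning on pairs of echo signals and camera images. The sketch below shows the shape of that procedure under loud assumptions: the data are synthetic, the "room" is a made-up linear response matrix (`room_response`), and a ridge regression stands in for the neural network the team actually used. All names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

N_BINS = 32                      # time bins in one echo trace (assumption)
IMAGE_SHAPE = (8, 8)             # tiny stand-in for a depth image (assumption)
N_PIXELS = IMAGE_SHAPE[0] * IMAGE_SHAPE[1]

# Hypothetical fixed room: maps a depth image to the echo trace it produces.
room_response = rng.random((N_BINS, N_PIXELS))

def record_pair():
    """Simulate one training sample: an echo trace and the matching camera image."""
    depth_image = rng.random(IMAGE_SHAPE)
    echo = room_response @ depth_image.ravel()
    return echo, depth_image

def fit(echoes, images, ridge=1e-3):
    """Ridge regression from echo traces to flattened images (stand-in for a neural network)."""
    targets = images.reshape(len(images), -1)
    gram = echoes.T @ echoes + ridge * np.eye(echoes.shape[1])
    return np.linalg.solve(gram, echoes.T @ targets)

def reconstruct(weights, echo):
    """Map a new echo trace to an estimated image."""
    return (echo @ weights).reshape(IMAGE_SHAPE)

# Training: collect many (echo, image) pairs while the scene changes.
pairs = [record_pair() for _ in range(2000)]
echoes = np.array([echo for echo, _ in pairs])
images = np.array([image for _, image in pairs])
weights = fit(echoes, images)

# After training, the camera is no longer needed: only the echo is used.
new_echo, true_image = record_pair()
estimate = reconstruct(weights, new_echo)
print(estimate.shape)
```

The point of the sketch is the workflow rather than the model: the camera supplies ground truth only during training, and afterward the learned mapping is specific to the one room whose response it has seen.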

The team was curious about the number of bounces needed to map out a room with their system, so they performed simulations with various numbers of echoes. “The more paths you have, the more you’ll be able to locate your object and extract information about the object’s shape and orientation,” Turpin says. However, the team found that collecting waves that bounced more than four times did not improve the image by much, because the extra reflections contain little additional information.
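A toy calculation hints at why extra paths help. The sketch below is not the team's simulation: it assumes a source and detector at the origin, one flat wall at a hypothetical position `wall_x`, mirror-like reflection off that wall, and a sound speed of about 343 m/s. Two object positions that give identical direct echoes produce different arrival times once a path that also touches the wall is included.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s, approximate value for air (assumption)

def arrival_times(obj, wall_x=3.0, speed=SPEED_OF_SOUND):
    """Return (direct echo time, object-then-wall echo time) in seconds.

    The source and detector sit at the origin. The second path runs
    source -> object -> wall -> detector; its length is found with the
    mirror-image trick, reflecting the detector across the plane x = wall_x.
    """
    obj = np.asarray(obj, dtype=float)
    direct_length = 2.0 * np.linalg.norm(obj)
    mirrored_detector = np.array([2.0 * wall_x, 0.0, 0.0])
    via_wall_length = np.linalg.norm(obj) + np.linalg.norm(obj - mirrored_detector)
    return direct_length / speed, via_wall_length / speed

# Two positions at the same distance from the detector, on opposite sides.
direct_a, via_wall_a = arrival_times([1.2, 1.6, 0.0])
direct_b, via_wall_b = arrival_times([-1.2, 1.6, 0.0])

print(np.isclose(direct_a, direct_b))      # direct echoes coincide
print(abs(via_wall_a - via_wall_b) > 1e-3)  # the wall path separates them
```

This says nothing about where the benefit levels off; the four-bounce figure comes from the team's own simulations.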

The echo-based images have very low resolution: you can tell when a person is in the room but not who they are. The technology might be useful in a hospital where the only information you want is whether a person is in bed or moving around. The detectors would work in the dark without violating a person’s privacy, Turpin says. Another advantage is that the technique doesn’t require dedicated instruments—even the radio-frequency antenna in a cell phone could work.

One drawback is that the neural network only works in the room where it was trained. The team is now investigating a system that could be trained on hundreds or thousands of different rooms and other building environments. The device could then operate without training in a room it’s never seen.

Gordon Wetzstein, a computational imaging expert from Stanford University, thinks this new method is exciting. He compares it to non-line-of-sight imaging, in which a hidden object is observed by bouncing laser light off a wall and capturing the reflected light scattered by the object. This paper “shows for the first time that higher-order bounces can be reliably used for these types of applications, significantly improving [the image quality] over existing results,” Wetzstein says. He foresees this technique helping in autonomous driving, which uses reflection-based radar or lidar to detect objects in the road.

–Michael Schirber

Michael Schirber is a Corresponding Editor for Physics Magazine based in Lyon, France.

References

1. A. Turpin et al., “3D imaging from multipath temporal echoes,” Phys. Rev. Lett. 126, 174301 (2021).