Unlocking the Hidden Information in Starlight

A provocative new result [1] by Mankei Tsang, Ranjith Nair, and Xiao-Ming Lu of the National University of Singapore suggests that a long-standing limitation on the precision of astronomical imaging, the Rayleigh criterion proposed in 1879 [2], is itself only an apparition. Using quantum metrology techniques, the researchers have shown that the separation of two uncorrelated point-like light sources, such as stars, can be estimated to arbitrary precision, given enough detected light, even as that separation decreases to zero.

Quantum metrology, a field that dates back to the pioneering work of Carl Helstrom in the late 1960s [3], is a peculiar hybrid of quantum mechanics and the classical estimation theory developed by statisticians in the 1940s. The methodology is a powerful one: it quantifies the resources needed for optimal estimation of elementary variables and fundamental constants. These resources include the preparation of quantum systems in a characteristic (often entangled) state, followed by judiciously chosen measurements from which a desired parameter, itself not directly measurable, may be inferred.

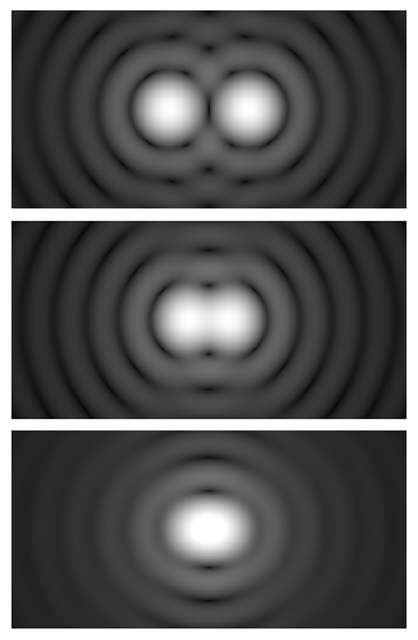

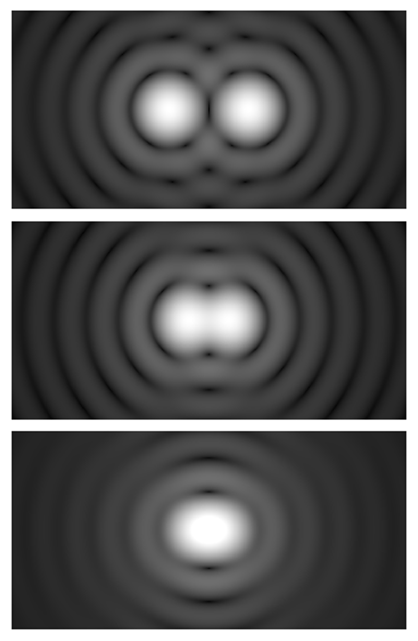

In the context of remote sensing, for example, imaging objects in the night sky, there is no way to prepare the physical system in an optimal state. In the case of starlight, the typical assumption is that the source is classical thermal light, the state of maximum entropy or “uninformativeness.” Imaging such sources is plagued by the limits of diffraction when the objects are in close proximity. The wave-like nature of light causes it to spread as it propagates and to bend around obstacles, for example when traversing a telescope aperture. The result is a diffraction pattern in the image plane described by a so-called point spread function (PSF). The Rayleigh criterion states that two closely spaced objects are just resolvable, that is, discernible from one another, when the center of the diffraction pattern, or peak of the PSF, of one object lies directly over the first minimum of the diffraction pattern of the other. Roughly, the PSF maxima must be farther apart than their widths (Fig. 1).
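For an ideal circular aperture the PSF is the Airy pattern, whose first dark ring lies at the first zero of the Bessel function J₁, so the criterion above becomes the familiar θ ≈ 1.22 λ/D for wavelength λ and aperture diameter D. The Python sketch below, assuming an unobstructed circular aperture and monochromatic light (assumptions not stated in the text), finds that zero numerically from the power series of J₁ and converts it into an angle. The function names are hypothetical, and the 550 nm wavelength and 2.4 m aperture in the usage lines are purely illustrative.

```python
import math


def bessel_j1(x: float, terms: int = 40) -> float:
    """Bessel function J1(x) from its power series; adequate for the small x used here."""
    total = 0.0
    for m in range(terms):
        coeff = (-1) ** m / (math.factorial(m) * math.factorial(m + 1))
        total += coeff * (x / 2) ** (2 * m + 1)
    return total


def first_zero_j1(lo: float = 3.0, hi: float = 4.5, tol: float = 1e-12) -> float:
    """First positive zero of J1 by bisection; J1 changes sign once on [3.0, 4.5]."""
    f_lo = bessel_j1(lo)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = bessel_j1(mid)
        if (f_mid > 0) == (f_lo > 0):
            lo, f_lo = mid, f_mid  # root lies to the right of mid
        else:
            hi = mid  # root lies to the left of mid
    return 0.5 * (lo + hi)


def rayleigh_angle(wavelength: float, aperture: float) -> float:
    """Angle (radians) at which one Airy peak sits on the other's first minimum."""
    x1 = first_zero_j1()  # about 3.8317
    return math.asin(x1 * wavelength / (math.pi * aperture))


if __name__ == "__main__":
    print(f"coefficient: {first_zero_j1() / math.pi:.4f}")  # about 1.22
    theta = rayleigh_angle(550e-9, 2.4)  # illustrative values only
    print(f"Rayleigh angle: {math.degrees(theta) * 3600:.3f} arcsec")
```

Because the angle scales as λ/D, doubling the aperture halves the smallest separation this criterion calls resolvable.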

Some astronomers say they are able to resolve objects slightly closer than the Rayleigh limit allows. Yet inevitably, as the angular separation between the objects decreases, the information about that separation obtainable by direct detection becomes negligible, and even the most optimistic astronomer, using the most sophisticated signal-processing techniques, must admit defeat. Correspondingly, as the separation approaches zero, the minimum error of any unbiased estimate of the separation diverges, a problem that has limited angular resolution in imaging since the time of Galileo. Typically, the mean-squared error in the estimate of a parameter scales with the number of repeated measurements, or data points, ν, as 1/ν. Even if the error per measurement is large, any desired precision can then be reached by collecting enough data points. When, however, the lower bound on the error of direct estimation of the separation diverges because of the Rayleigh limit, the 1/ν factor cannot help: for any fixed ν, the bound still grows without limit as the separation shrinks. This is what Tsang and collaborators call Rayleigh’s curse.
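A toy calculation makes Rayleigh's curse concrete. As an illustrative assumption, take two equally bright sources at ±θ/2 on a line, each imaged with a one-dimensional Gaussian intensity PSF of width σ (the Gaussian shape follows the model in [1]; the function names here are hypothetical). For direct imaging, the Fisher information F(θ) carried by each detected photon sets the Cramér-Rao bound: an unbiased estimate from ν photons has mean-squared error at least 1/(ν F(θ)). The Python sketch below evaluates F(θ) by midpoint-rule quadrature.

```python
import math


def gaussian(u: float, sigma: float) -> float:
    """Normalized 1D Gaussian intensity PSF of width sigma."""
    return math.exp(-u * u / (2 * sigma * sigma)) / (math.sqrt(2 * math.pi) * sigma)


def direct_imaging_fisher(theta: float, sigma: float = 1.0, n_grid: int = 20001) -> float:
    """Per-photon Fisher information about the separation theta for direct imaging.

    The image-plane density is p(x) = [g(x - theta/2) + g(x + theta/2)] / 2 and
    F = integral of (dp/dtheta)^2 / p dx, evaluated with the midpoint rule.
    """
    half_width = theta / 2 + 10 * sigma  # the Gaussian tails beyond this are negligible
    dx = 2 * half_width / n_grid
    total = 0.0
    for i in range(n_grid):
        x = -half_width + (i + 0.5) * dx
        g_minus = gaussian(x - theta / 2, sigma)
        g_plus = gaussian(x + theta / 2, sigma)
        p = 0.5 * (g_minus + g_plus)
        # Analytic derivative of p with respect to theta.
        dp = 0.5 * ((x - theta / 2) * g_minus - (x + theta / 2) * g_plus) / (2 * sigma**2)
        total += dp * dp / p * dx
    return total


def direct_imaging_crb(theta: float, nu: int, sigma: float = 1.0) -> float:
    """Cramer-Rao lower bound on the mean-squared error after nu detected photons."""
    info = direct_imaging_fisher(theta, sigma)
    return math.inf if info == 0.0 else 1.0 / (nu * info)


if __name__ == "__main__":
    for theta in (8.0, 2.0, 1.0, 0.1, 0.01):
        print(theta, direct_imaging_fisher(theta), direct_imaging_crb(theta, nu=10_000))
```

With σ = 1, F approaches 1/(4σ²) = 0.25 for well-separated sources but falls off roughly as θ²/(8σ⁴) when θ ≪ σ, so for any fixed ν the bound grows without limit as θ → 0; at θ = 0 the sketch returns infinity.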

The first achievement of their work, which uses a quantum metrology formalism to minimize the estimation error, is to show that there is no fundamental obstacle to estimating the separation of two PSFs in one dimension (that is, for sources that sit on a line). As the separation of the two PSFs decreases to zero, the amount of information obtainable with an optimal measurement stays constant. This discovery is nicely summed up by Tsang, who says we should apologize to the starlight “as it travels billions of light years to reach us, yet our current technology and even our space telescopes turn out to be wasting a lot of the information it carries.” [4]

It could be objected that this is merely a theoretical proof: the quantum metrology formalism guarantees that an optimal measurement, one that minimizes the estimation error for the separation parameter, always exists, but that measurement can in general depend on the very value of the parameter being estimated. To obviate such concerns, Tsang and his colleagues propose a strategy, based on state-of-the-art quantum optics technology, that achieves the minimal error in the estimation of the separation variable; counterintuitively, this error remains constant for all separation values, under the assumption that the PSFs have a Gaussian shape. The method, which the authors call spatial mode demultiplexing (SPADE), splits the light from the two sources into optical waveguides that have a quadratic lateral refractive-index profile. Mathematically, the SPADE measurement decomposes the overlapping PSFs (a real function in one dimension) into the complete basis of Hermite functions, just as a Fourier transform decomposes a real function into a superposition of sine and cosine terms. A posteriori, one may be tempted to use intuition to explain why this Hermite basis measurement does not seem to suffer Rayleigh’s curse; then again, had such intuition been forthcoming, the result might not have stayed hidden for so long. (This elusiveness relates to subtleties in the estimation of a single parameter extracted from the joint statistics of two incoherent light sources.)
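The constant error of SPADE can be checked numerically. As we read the Gaussian-PSF analysis in [1], when the demultiplexer is centered on the known centroid, each photon lands in Hermite-Gaussian mode q with Poisson probability P_q = e^(−Q) Q^q / q!, where Q = θ²/(16σ²). The Python sketch below sums the Fisher information of that distribution over modes; the mode cutoff q_max and the function names are illustrative assumptions, and θ = 0 is excluded because the log-likelihood of this model is singular there.

```python
import math


def spade_mode_probabilities(theta: float, sigma: float = 1.0, q_max: int = 200) -> list[float]:
    """Poisson probabilities P_q = exp(-Q) Q^q / q! for modes q = 0..q_max."""
    if theta <= 0:
        raise ValueError("theta must be positive")
    big_q = theta**2 / (16 * sigma**2)
    # Work in log space so large q neither overflows nor raises.
    return [math.exp(-big_q + q * math.log(big_q) - math.lgamma(q + 1)) for q in range(q_max + 1)]


def spade_fisher(theta: float, sigma: float = 1.0, q_max: int = 200) -> float:
    """Per-photon Fisher information about theta from ideal mode counting."""
    big_q = theta**2 / (16 * sigma**2)
    dq_dtheta = theta / (8 * sigma**2)
    info = 0.0
    for q, p in enumerate(spade_mode_probabilities(theta, sigma, q_max)):
        # Score d(ln P_q)/dtheta = (q / Q - 1) * dQ/dtheta.
        score = (q / big_q - 1.0) * dq_dtheta
        info += p * score * score
    return info


if __name__ == "__main__":
    for theta in (5.0, 1.0, 0.1, 0.01):
        print(theta, spade_fisher(theta))  # stays near 1/(4 sigma^2) = 0.25
```

The sum reproduces (dQ/dθ)²/Q = 1/(4σ²) for every θ > 0, the value that direct imaging only approaches for well-separated sources; this is the sense in which SPADE escapes Rayleigh's curse in this model.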

One minor caveat is that full imaging of two point sources at positions X1 and X2 requires estimation of both the separation X1−X2 and the centroid (X1+X2)/2. SPADE is optimal only when the centroid is already known to high precision. Centroid estimation, however, suffers no analog of Rayleigh’s curse: it can be carried out by direct imaging, and its error falls off as 1/ν for ν ≫ 1 data points.
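The contrast with the centroid can be illustrated with a small Monte Carlo. Assuming the same equal-brightness Gaussian model (width σ, sources at c ± θ/2), the sample mean of ν directly imaged photon positions is an unbiased estimate of the centroid c with mean-squared error (σ² + θ²/4)/ν, which shrinks as 1/ν whatever the separation. The sample mean is our illustrative choice of estimator, not necessarily the optimal one, and the function names, seed, and true centroid value are hypothetical.

```python
import random


def simulate_photon(centroid: float, theta: float, sigma: float, rng: random.Random) -> float:
    """Draw one photon position: pick a source at random, then blur by the Gaussian PSF."""
    source = centroid + (theta / 2 if rng.random() < 0.5 else -theta / 2)
    return rng.gauss(source, sigma)


def centroid_mse(nu: int, theta: float, sigma: float = 1.0, trials: int = 2000, seed: int = 1) -> float:
    """Empirical mean-squared error of the sample-mean centroid estimate from nu photons."""
    rng = random.Random(seed)
    true_centroid = 0.3  # arbitrary illustrative value
    err_sq = 0.0
    for _ in range(trials):
        estimate = sum(simulate_photon(true_centroid, theta, sigma, rng) for _ in range(nu)) / nu
        err_sq += (estimate - true_centroid) ** 2
    return err_sq / trials


def centroid_mse_theory(nu: int, theta: float, sigma: float = 1.0) -> float:
    """Variance of the sample mean of the two-source mixture: (sigma^2 + theta^2 / 4) / nu."""
    return (sigma**2 + theta**2 / 4) / nu


if __name__ == "__main__":
    for nu in (10, 100, 1000):
        print(nu, centroid_mse(nu, theta=0.1, trials=200), centroid_mse_theory(nu, theta=0.1))
```

Each tenfold increase in ν should cut the empirical error by roughly a factor of ten, even for θ well below σ.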

A second detail worth pondering is that this result uses techniques from the quantum domain to reveal a classical result. (All of the physical assumptions about starlight admit a classical model.) The quantum metrology formalism is used to optimally estimate a parameter, yet no quantum correlations exist in the system for any value of that parameter, that is, for any angular separation of the two stars. Even when no quantum correlations are present, the formalism still identifies the best possible measurement strategy and the smallest achievable estimation error.

An added blessing of quantum metrology is that it allows the development of generalized uncertainty relationships, for example between temperature and energy for a system at equilibrium [5], or between photon number and the path-length difference between the two arms of an interferometer. The result of Tsang and his colleagues can be presented as another type of generalized uncertainty, between source separation and “momentum”: the mean-squared error associated with separation estimation scales inversely with the momentum (Fourier) space variance of the overlapping PSFs.
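In symbols, and as we read the general result of [1] for a real one-dimensional PSF amplitude ψ(x) (the notation below is ours), ν detected photons bound the mean-squared error of any unbiased separation estimate θ̂ by

```latex
\mathbb{E}\big[(\hat\theta - \theta)^2\big] \;\ge\; \frac{1}{\nu\,\Delta k^2},
\qquad
\Delta k^2 = \int_{-\infty}^{\infty} \left(\frac{d\psi}{dx}\right)^{2} dx .
```

For a real ψ, Δk² is the variance of the PSF in momentum (Fourier) space. For the Gaussian amplitude ψ(x) = (2πσ²)^(−1/4) exp(−x²/(4σ²)), whose intensity has width σ, dψ/dx = −xψ/(2σ²) gives Δk² = σ²/(4σ⁴) = 1/(4σ²), so the bound equals 4σ²/ν for every separation θ.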

As for impact on the field, the authors’ study has already produced a flurry of generalizations and new experimental proposals. During the past six months there have been four proof-of-principle experiments, first in Singapore by Tsang’s colleague Alex Ling and collaborators [6], and then in Canada and Europe [7–9]. A subsequent theory paper from researchers at the University of York [10] extends Tsang and colleagues’ result, which was derived for incoherent thermal sources such as starlight, to any joint quantum state of the two sources. This work explores the roles of squeezing (of quantum fluctuations) and of quantum entanglement in improving measurement precision, extending applicability to domains in which the source light can be controlled, such as microscopy.

Tsang and his colleagues have provided a new perspective on the utility of quantum metrology, and they have reminded us that even in observational astronomy—one of the oldest branches of science—there are (sometimes) still new things to be learned, at the most basic level.

This research is published in Physical Review X.

References

1. M. Tsang, R. Nair, and X.-M. Lu, “Quantum Theory of Superresolution for Two Incoherent Optical Point Sources,” Phys. Rev. X 6, 031033 (2016).

2. L. Rayleigh, “XXXI. Investigations in Optics, with Special Reference to the Spectroscope,” Philos. Mag. 8, 261 (1879).

3. C. W. Helstrom, “Resolution of Point Sources of Light as Analyzed by Quantum Detection Theory,” IEEE Trans. Inf. Theory 19, 389 (1973).

4. Private communication.

5. B. Mandelbrot, “On the Derivation of Statistical Thermodynamics from Purely Phenomenological Principles,” J. Math. Phys. 5, 164 (1964).

6. T. Z. Sheng, K. Durak, and A. Ling, “Fault-Tolerant and Finite-Error Localization for Point Emitters Within the Diffraction Limit,” arXiv:1605.07297.

7. F. Yang, A. Taschilina, E. S. Moiseev, C. Simon, and A. I. Lvovsky, “Far-Field Linear Optical Superresolution via Heterodyne Detection in a Higher-Order Local Oscillator Mode,” arXiv:1606.02662.

8. W. K. Tham, H. Ferretti, and A. M. Steinberg, “Beating Rayleigh’s Curse by Imaging Using Phase Information,” arXiv:1606.02666.

9. M. Paur, B. Stoklasa, Z. Hradil, L. L. Sanchez-Soto, and J. Rehacek, “Achieving Quantum-Limited Optical Resolution,” arXiv:1606.08332.

10. C. Lupo and S. Pirandola, “Ultimate Precision Bound of Quantum and Sub-Wavelength Imaging,” arXiv:1604.07367.