Flocks Without Memory

Researchers use a variety of models to explain the emergence of collective patterns seen in schools of fish, flocks of birds, or swarms of bacteria. Most models assume that such patterns arise from velocity alignment among the particles. Alignment requires the particles to have memory so that they can compute their neighbors’ direction of motion. New work, however, shows that a simpler model, based only on instantaneous visual information, can capture many complex features of flocking. The realization that flocking requires fewer ingredients than previously thought may lead to simpler design strategies for robotic devices that mimic natural flocking.

The model developed by Lucas Barberis and Fernando Peruani at the University of Côte d’Azur in France assumes each particle’s motion is influenced by the position, and not the velocity, of its neighbors. The model is based on two principles. First, individuals are attracted to particles within their field of view, or vision cone. Second, attraction is switched on only for particles located within a cognitive horizon; those farther away are ignored.
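The two principles can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names (`visible_neighbors`, `step`), the constant-speed motion, the sine-based turning rule, the optional Gaussian noise, and all parameter values are assumptions chosen for illustration. The only parts taken from the description above are that a particle reacts to neighbor positions rather than velocities, and that it counts only neighbors inside its vision cone and within its cognitive horizon.

```python
import math
import random


def visible_neighbors(i, positions, headings, horizon, half_angle):
    """Indices of particles inside particle i's vision cone and cognitive horizon."""
    xi, yi = positions[i]
    seen = []
    for j, (xj, yj) in enumerate(positions):
        if j == i:
            continue
        dx, dy = xj - xi, yj - yi
        dist = math.hypot(dx, dy)
        if dist == 0.0 or dist > horizon:
            continue  # beyond the cognitive horizon (or exactly on top of i)
        # Angle between i's heading and the direction toward j, wrapped to [-pi, pi].
        bearing = math.atan2(dy, dx) - headings[i]
        bearing = math.atan2(math.sin(bearing), math.cos(bearing))
        if abs(bearing) <= half_angle:
            seen.append(j)
    return seen


def step(positions, headings, speed=1.0, horizon=1.0, half_angle=math.pi / 2,
         turn_rate=1.0, noise=0.0, dt=0.1, rng=random):
    """Advance all particles by one explicit Euler step (assumed update rule)."""
    new_headings = []
    for i, theta in enumerate(headings):
        seen = visible_neighbors(i, positions, headings, horizon, half_angle)
        turn = 0.0
        if seen:
            xi, yi = positions[i]
            # Steer toward the positions of visible neighbors; no velocities are used.
            for j in seen:
                xj, yj = positions[j]
                turn += math.sin(math.atan2(yj - yi, xj - xi) - theta)
            turn *= turn_rate / len(seen)
        kick = noise * math.sqrt(dt) * rng.gauss(0.0, 1.0)
        new_headings.append(theta + turn * dt + kick)
    # Move every particle at constant speed along its current heading.
    new_positions = [(x + speed * math.cos(t) * dt, y + speed * math.sin(t) * dt)
                     for (x, y), t in zip(positions, headings)]
    return new_positions, new_headings
```

Calling `step` repeatedly on a list of `(x, y)` positions and a list of heading angles advances the flock in time. In this sketch, `half_angle` sets the width of the vision cone and `horizon` sets the cognitive horizon.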

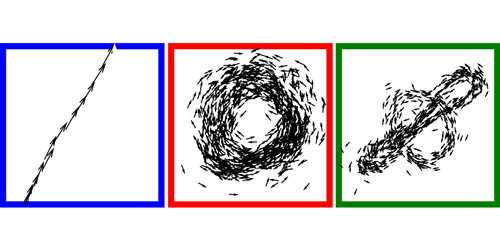

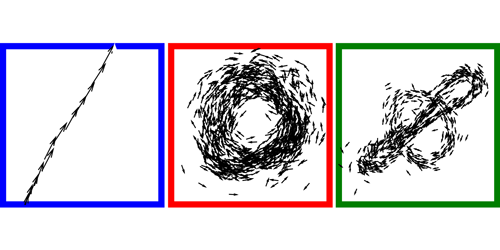

The researchers show that their model can predict the emergence of a wide range of well-known flocking phases: “aggregates” in which the particles gather in clumps or rings; “worms” consisting of single-file queues of particles; and nematic-like phases, resembling those observed in liquid crystals, in which particles move in a series of parallel planes with long-range order.

This research was published in Physical Review Letters.

–Matteo Rini

Matteo Rini is the Deputy Editor of Physics.