Keeping One Step Ahead of Errors

Quantum systems are very delicate, both because they tend to be very small, and thus vulnerable to even tiny disturbances, and because any process that gains information about the state of a quantum system alters it. Nevertheless, quantum coherence can, in principle, be maintained indefinitely using error-correcting codes that shield quantum information from the ravages of a harsh environment. Protected quantum information in turn enables large-scale quantum technologies, such as quantum computers, which can take advantage of quantum interference to solve some computational problems much faster than any conceivable classical computer. One promising approach is to use topological error-correcting codes, which store quantum information safely by associating it with some topological property of the system, such as a path stretching all the way around it. Now, in a paper appearing in Physical Review X, Hector Bombin at the Perimeter Institute in Waterloo, Canada, and an international group of scientists from Switzerland, Japan, and the US have studied two families of topological codes and determined how much protection they provide against the most symmetric type of errors [1]. They do this by making a connection between the error-correcting codes and certain purely classical systems. The low-error regime, where the quantum code functions, corresponds to the low-temperature ordered phase in the classical system.

In the first burst of research on quantum error-correcting codes in the 1990s, Dorit Aharonov pointed out [2] that the codes undergo a phase transition between a low-error phase and a high-error phase. When the error rate is low, the error-correcting code can handle errors as they arise, keeping the system close to the ideal state it would have if there were no errors at all. As the error rate rises, it becomes more common for multiple errors to occur at the same time, and the error-correcting code has more and more trouble keeping up. Eventually, the error rate passes a threshold, and errors pop up faster than the code can eliminate them. Below the threshold error rate, the code wins the race, and above the threshold, the errors win. When the code is very large, there is a sharp phase transition between a phase where quantum information can be stored indefinitely and a phase where the information is rapidly destroyed by the errors. The same considerations, albeit with more complicated procedures, apply when the quantum gates used to build the error-correction circuits are themselves imperfect, leading to a phase transition between a phase where large-scale quantum computation is possible and one where it is not.
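The threshold behavior described above can be illustrated with a far simpler stand-in than a quantum code. The sketch below assumes a classical repetition code decoded by majority vote, which is not the code studied in the paper; the function name `logical_error_rate` and the sample values of `p` and `n` are chosen only for illustration. For this toy code the threshold sits at a physical error rate of one half.

```python
from math import comb

def logical_error_rate(n: int, p: float) -> float:
    """Probability that a majority vote over n independently flipped bits fails (n odd)."""
    # The vote fails when more than half of the n copies are flipped.
    return sum(comb(n, k) * p**k * (1 - p)**(n - k)
               for k in range(n // 2 + 1, n + 1))

# Below the threshold (p < 0.5) larger codes do better; above it they do worse.
for p in (0.1, 0.4, 0.6):
    rates = [logical_error_rate(n, p) for n in (3, 11, 51)]
    print(p, ["%.3g" % r for r in rates])
```

As the code size grows, the failure probability tends to zero on one side of the threshold and to one on the other, which is the sharpening into a phase transition that the text describes.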

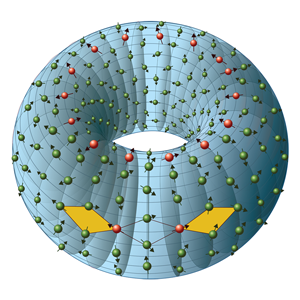

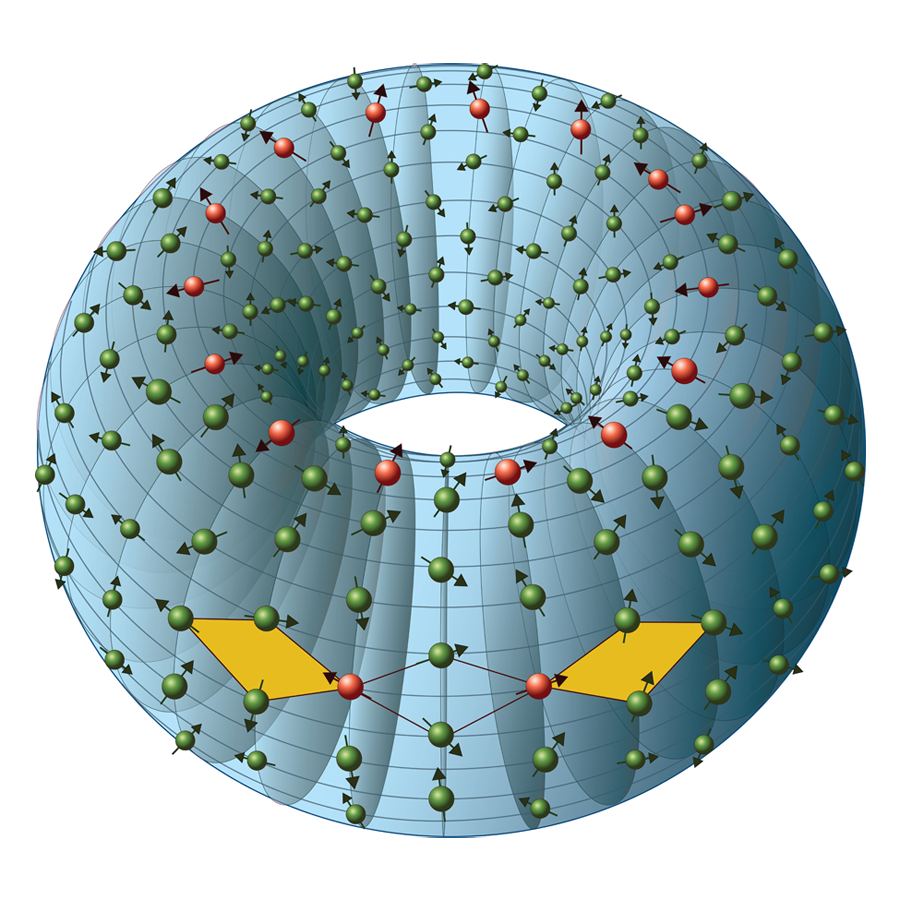

A large number of quantum error-correcting codes are known. Topological codes form one particularly interesting class of codes. The most-studied topological code is the toric code [3], so called because the quantum bits (qubits) comprising it are spread across the surface of a torus (a geometrical object with the shape of a donut). In order to change or access any of the quantum information stored in the toric code, one must manipulate qubits stretching all the way around the torus, either around the ring or through the hole in the center. The code works by enforcing local conditions on the qubits. Any local disturbance of the system will cause it to violate some of the local conditions; only by creating a chain of errors reaching around the torus can the encoded information be changed without revealing the presence of the errors (see Fig. 1). Because their error-correction properties depend only on local checks, topological codes are particularly promising for physical realizations, since error correction can be performed without shuttling qubits over long distances.
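The role of the local checks can be made concrete with a small classical bookkeeping sketch. It assumes the usual layout in which qubits sit on the edges of a square lattice with periodic boundaries, and it tracks only one type of error together with the vertex checks that detect it. The names `syndrome` and `empty` and the lattice size are illustrative choices, not taken from the paper.

```python
def syndrome(h, v):
    """Return the set of vertices whose parity check is violated.

    h[i][j] = 1 marks an error on the horizontal edge from vertex (i, j) to (i, j+1);
    v[i][j] = 1 marks an error on the vertical edge from vertex (i, j) to (i+1, j).
    Indices wrap around, which turns the lattice into a torus.
    """
    L = len(h)
    violated = set()
    for i in range(L):
        for j in range(L):
            # Each check looks only at the four edges touching its vertex.
            parity = h[i][j] ^ h[i][(j - 1) % L] ^ v[i][j] ^ v[(i - 1) % L][j]
            if parity:
                violated.add((i, j))
    return violated

def empty(L):
    """An L x L array of edges with no errors."""
    return [[0] * L for _ in range(L)]

L = 4

# A single local error trips the two checks at the ends of the affected edge.
h, v = empty(L), empty(L)
h[1][1] = 1
single_error = syndrome(h, v)

# A chain of errors running all the way around the torus trips no check.
h, v = empty(L), empty(L)
for j in range(L):
    h[0][j] = 1
loop_error = syndrome(h, v)

print(single_error)  # the two endpoints (1, 1) and (1, 2)
print(loop_error)    # empty set
```

The second case is the dangerous one: the checks report nothing, yet the chain has wound once around the torus.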

One approach to understanding the properties of topological codes is to find an equivalence between the quantum code and a simpler system. Dennis et al. [4] showed that the behavior of the toric code experiencing bit-flip errors can be subsumed into that of a purely classical system called the two-dimensional random-bond Ising model. This model consists of a lattice of classical spins, each of which can point either up or down. For each neighboring pair of spins, there is a random interaction that makes them want either to line up the same way (ferromagnetic) or to be opposite (antiferromagnetic). When most of the interactions are ferromagnetic and the temperature is low, the spins will mostly line up. At higher temperatures, or when there is a larger percentage of antiferromagnetic interactions, the spins will be disordered, split roughly half and half between up and down.
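A minimal sketch of the random-bond Ising model may help fix ideas. It assumes a square lattice with periodic boundaries, couplings of strength +1 (ferromagnetic) or -1 (antiferromagnetic), and spins stored as +1 or -1; the names `random_bonds`, `energy`, and `p_anti` are hypothetical.

```python
import random

def random_bonds(L, p_anti, rng):
    """Draw one coupling per bond: -1 with probability p_anti, otherwise +1."""
    def draw():
        return -1 if rng.random() < p_anti else 1
    right = [[draw() for _ in range(L)] for _ in range(L)]  # bond to the right neighbor
    down = [[draw() for _ in range(L)] for _ in range(L)]   # bond to the neighbor below
    return right, down

def energy(spins, right, down):
    """Energy H = -sum over neighboring pairs of J_ij * s_i * s_j."""
    L = len(spins)
    total = 0
    for i in range(L):
        for j in range(L):
            s = spins[i][j]
            total -= right[i][j] * s * spins[i][(j + 1) % L]
            total -= down[i][j] * s * spins[(i + 1) % L][j]
    return total

rng = random.Random(0)
L = 4
all_up = [[1] * L for _ in range(L)]

# With no antiferromagnetic bonds, aligned spins satisfy every one of the 2*L*L bonds.
right, down = random_bonds(L, 0.0, rng)
print(energy(all_up, right, down))  # -32

# Antiferromagnetic bonds raise the energy of the aligned configuration.
right, down = random_bonds(L, 0.2, rng)
print(energy(all_up, right, down))
```

Each antiferromagnetic bond penalizes aligned neighbors, so a large enough fraction of them destroys the ordered phase even at low temperature.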

The toric code corresponds to the two-dimensional random-bond Ising model with a particular relationship between the temperature and the probability that each interaction is ferromagnetic. Having all the spins line up corresponds to successful error correction, whereas a random arrangement of spins corresponds to situations where errors are too common and error correction fails. Thus studying the location of the phase transition between the ordered and disordered phases of the Ising model also tells us the threshold for error correction in the toric code.
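In the statistical-mechanics literature this relationship is usually called the Nishimori line. Written in one common convention (assumed here: couplings of strength $\pm J$, inverse temperature $\beta$, and each bond antiferromagnetic with probability $p$, where $p$ plays the role of the bit-flip error rate), it reads:

```latex
e^{-2\beta J} = \frac{p}{1 - p}
```

Under this condition a small error rate $p$ corresponds to a low temperature, so the ordered phase of the spin model lines up with the regime in which the code succeeds.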

However, the connection between the toric code and the random-bond Ising model only applies when the errors are of a specific form, with bit-flip and phase errors occurring independently. The new paper by Bombin et al. [1] demonstrates a similar equivalence when the toric code suffers from depolarizing noise, an error process where all types of single-qubit errors are equally likely. The classical model appearing in this case is a more complicated system called the interacting eight-vertex model. They also derive a similar result for another family of topological codes called color codes. As before, studying the phases of the classical models reveals the threshold for the quantum codes to correct depolarizing noise. The authors find that taking advantage of the specific properties of depolarizing noise (treating all error types together) allows error correction at a higher error rate than the more naive approach of treating each type of error separately. This is welcome: Coming up with quantum error-correcting codes that tolerate more errors brings us closer to the challenging long-term goal of building large quantum computers.
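One way to see why treating the error types together can help is to look at how bit flips and phase flips are correlated under depolarizing noise. The sketch below assumes the standard single-qubit picture in which X, Y, and Z errors each occur with probability p/3 and a Y error counts as both a bit flip and a phase flip. The function name and the value of `p` are illustrative, and the calculation shows only the correlation, not the decoding analysis of the paper.

```python
def depolarizing_marginals(p):
    """Split depolarizing noise of total rate p into bit-flip and phase-flip statistics."""
    px = py = pz = p / 3      # X, Y, and Z errors are equally likely
    bit_flip = px + py        # X and Y both flip the bit
    phase_flip = pz + py      # Z and Y both flip the phase
    both = py                 # only Y does both at once
    return bit_flip, phase_flip, both

p = 0.15
bit_flip, phase_flip, both = depolarizing_marginals(p)

# If the two error types were independent, "both" would equal the product.
print("joint probability:      ", both)
print("independent prediction: ", bit_flip * phase_flip)
```

The joint probability is noticeably larger than the independent prediction, so seeing a bit flip on a qubit makes a phase flip there more likely. A decoder that handles the two types separately discards that information.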

Full Disclosure: Though Hector Bombin is a colleague of mine (Gottesman) at the Perimeter Institute, his work on this paper was conducted independently.

References

[1] H. Bombin, R. S. Andrist, M. Ohzeki, H. G. Katzgraber, and M. A. Martin-Delgado, Phys. Rev. X 2, 021004 (2012).

[2] D. Aharonov, Phys. Rev. A 62, 062311 (2000).

[3] A. Yu. Kitaev, Ann. Phys. 303, 2 (2003).

[4] E. Dennis, A. Kitaev, A. Landahl, and J. Preskill, J. Math. Phys. 43, 4452 (2002).